What does the patent behind Netflix’s acquisition of Ben Affleck’s AI company actually do?

I read all 68 pages of the patent behind Ben Affleck’s stealth AI start-up that Netflix just bought in order to understand exactly how the system works (and so you don't have to).

This article is part of my ‘Big Ideas’ series, in which I explore the evolving landscape of the film industry. Each instalment combines data, research, and analysis to go deep on a trend, idea or case study that could reshape the film business over the next decade.

I have written a few times about how patents can be an excellent way to see which technologies will soon affect / improve / disrupt the industry.

This has led a number of readers to reach out and ask more about the technology in Ben Affleck’s AI company that would make Netflix want to buy it.

Today I’m going to go through the background, the patent and what it might mean for the film business in the near future.

If you want more context on today’s topics, here are a few recent articles which may help:

What Netflix’s patents reveal about the future of watching movies

What Amazon’s patent filings reveal about how filmmaking may change in the future

How AI tools are already changing the jobs of film professionals today

Netflix owns some pretty strange trademarks, and they reveal a lot about the company

The background - Netflix bought Ben Affleck’s AI filmmaking company

Netflix announced a few days ago that it has acquired InterPositive, an artificial-intelligence filmmaking company founded by Ben Affleck.

InterPositive was founded in 2022 and operated largely in “stealth mode” under the corporate entity Fin Bone LLC. The company says it’s developing tools intended to support filmmaking rather than replace it, focusing on production workflows such as relighting scenes, removing wires from stunt shots, correcting continuity issues and enhancing backgrounds in post-production.

The company itself is fairly small, with just 16 engineers, researchers, and creative staff, but in the world of AI start-ups, that doesn’t mean it couldn’t be cooking up industry-changing tech.

Netflix hasn’t revealed how much it paid, but we do know that InterPositive’s assets and staff will now be part of Netflix, and that Affleck will remain as a senior advisor.

The one specific thing we do know about is a patent.

The patent - US 12,511,904 B1

In December 2025, the United States Patent and Trademark Office granted US Patent 12,511,904 B1, titled:

“Method, System, and Computer-Readable Medium for Training a Captioner Model to Generate Captions for Video Content by Analyzing and Recognizing Cinematic Elements.”

The inventor listed on the filing is Benjamin Geza Affleck-Boldt, and the assignee is InterPositive, LLC (i.e. the company Netflix has just acquired).

The patent describes a system that teaches an AI model to watch film footage and understand how the shot was filmed.

Most AI vision systems are trained to recognise objects or actions. They answer questions like:

Is there a dog in this image?

Is someone driving a car?

Is a person walking across the street?

InterPositive is focusing on something slightly different, namely, how the scene was actually shot.

The model learns to recognise cinematic features such as:

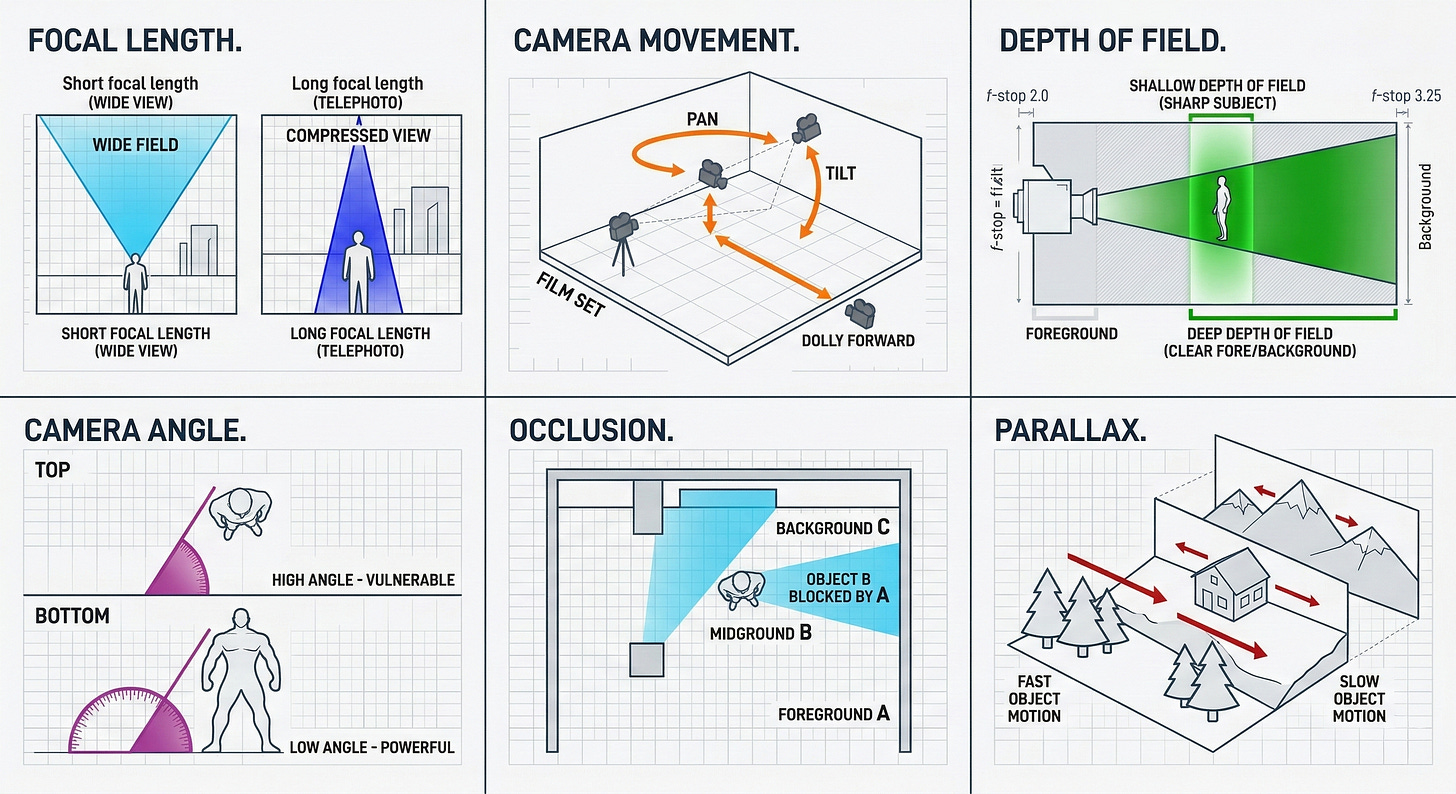

Focal length indicates which lens is being used and how it affects the image's perspective. Short focal lengths create wider views with more background visible, while longer focal lengths compress space and make subjects appear closer to the background.

Camera movement tracks how the camera changes position or orientation during a shot, such as panning, tilting, or moving forward on a dolly. These movements alter how the viewer experiences the scene and can signal emphasis or narrative focus.

Depth of field refers to how much of the image appears in focus at once. A shallow depth of field keeps the subject sharp while blurring the background, while a deep depth of field keeps both foreground and background clear.

Camera angle refers to the camera's position relative to the subject. For example, a high angle looks down on a subject, while a low angle looks up, often changing how powerful or vulnerable a character appears.

Occlusion occurs when one object partially blocks another from the camera’s view. This can reveal information about the spatial arrangement of objects in a scene.

Parallax describes how objects at different distances appear to move at different speeds as the camera moves. Foreground elements shift more quickly than distant backgrounds, helping convey depth in the image.

In other words, it attempts to convert video footage into cinematography metadata.
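To make two of those features concrete, here is a minimal sketch of the textbook pinhole-camera geometry behind focal length and parallax. It is not taken from the patent; the function names and the default 36 mm (full-frame) sensor width are my own assumptions for illustration.

```python
import math


def horizontal_fov_degrees(focal_length_mm: float, sensor_width_mm: float = 36.0) -> float:
    """Horizontal field of view of an idealised pinhole camera.

    The 36 mm default is an assumed full-frame sensor width.
    """
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))


def parallax_shift_mm(focal_length_mm: float, camera_move_m: float, distance_m: float) -> float:
    """How far a point shifts on the sensor when the camera slides sideways.

    Nearer objects (small distance_m) shift more than distant ones.
    """
    return focal_length_mm * camera_move_m / distance_m


# A wide lens sees far more of the scene than a long lens.
wide = horizontal_fov_degrees(24)
long_lens = horizontal_fov_degrees(85)

# Same half-metre camera move, objects at 2 m and 20 m.
near_shift = parallax_shift_mm(50, 0.5, 2)
far_shift = parallax_shift_mm(50, 0.5, 20)
```

Under these assumptions a 24mm lens covers roughly 74 degrees horizontally and an 85mm lens roughly 24 degrees, while the nearer object shifts ten times as far across the frame as the distant one. Cues like these are what make it plausible to estimate lens and depth information from the footage alone.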

The way the model looks at filmed footage mirrors how filmmakers in the real world think about their shots. So InterPositive’s claim that they are looking to use AI to enhance current filmmaking rather than destroy it is borne out in this patent.

I doubt that anyone but the most ardent anti-AI filmmakers would have a problem with a model that looks at real footage and gives you helpful information about it (assuming the information is correct, of course!).

The model - How does it work?

The process starts with a large collection of video clips, each paired with some form of description or metadata about what is happening in the shot. The system extracts individual frames from these videos and converts them into a format that a machine-learning model can process.
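As a rough illustration of that extraction step, the sketch below picks which frames to pull from a clip and rescales pixel values into the 0 to 1 range that models typically expect. The sampling interval, the normalisation and both function names are my assumptions, not details from the patent.

```python
def sample_frame_indices(total_frames: int, fps: float, every_seconds: float = 1.0) -> list[int]:
    """Choose one frame index per `every_seconds` of footage (hypothetical sampling rule)."""
    step = max(1, round(fps * every_seconds))
    return list(range(0, total_frames, step))


def normalise_pixels(frame: list[list[int]]) -> list[list[float]]:
    """Scale 8-bit pixel values (0-255) to floats between 0.0 and 1.0."""
    return [[value / 255.0 for value in row] for row in frame]


# A ten-second clip at 24 frames per second, sampled every two seconds.
indices = sample_frame_indices(total_frames=240, fps=24.0, every_seconds=2.0)

# A tiny 1x2 greyscale "frame": one black pixel, one white pixel.
tensor_ready = normalise_pixels([[0, 255]])
```

In practice a video library would do the decoding; the point here is only that a clip becomes a manageable set of numeric arrays before any learning happens.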

Each frame is then labelled with information describing the cinematic elements present in the shot. This includes the features I already mentioned, such as focal length and camera movement, as well as framing style and distance from the subject.
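One way to picture such a label is as a small structured record that can be turned into a caption for the captioner model to learn from. This is a hypothetical sketch: the class, its field names and the caption wording are mine, not the patent's.

```python
from dataclasses import dataclass


@dataclass
class FrameLabel:
    """Hypothetical per-frame label; field names are illustrative only."""
    focal_length_mm: float
    camera_movement: str
    framing: str
    subject_distance: str

    def to_caption(self) -> str:
        """Render the label as a plain-text training caption."""
        return (
            f"{self.framing} shot, {self.focal_length_mm:g}mm lens, "
            f"{self.camera_movement}, subject at {self.subject_distance} distance"
        )


label = FrameLabel(
    focal_length_mm=35,
    camera_movement="slow dolly forward",
    framing="medium",
    subject_distance="close",
)
caption = label.to_caption()
```

Pairing each extracted frame with a caption like this gives the model examples of what "how the shot was filmed" looks like in words.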