How one-star and ten-star votes break IMDb's user score

A deep dive into IMDb’s 1-star and 10-star extremes, analysing time trends, genres, countries, demographics and the people who seem to benefit most from lots of generous voting.

Last week, two fandoms started fighting over a decimal point.

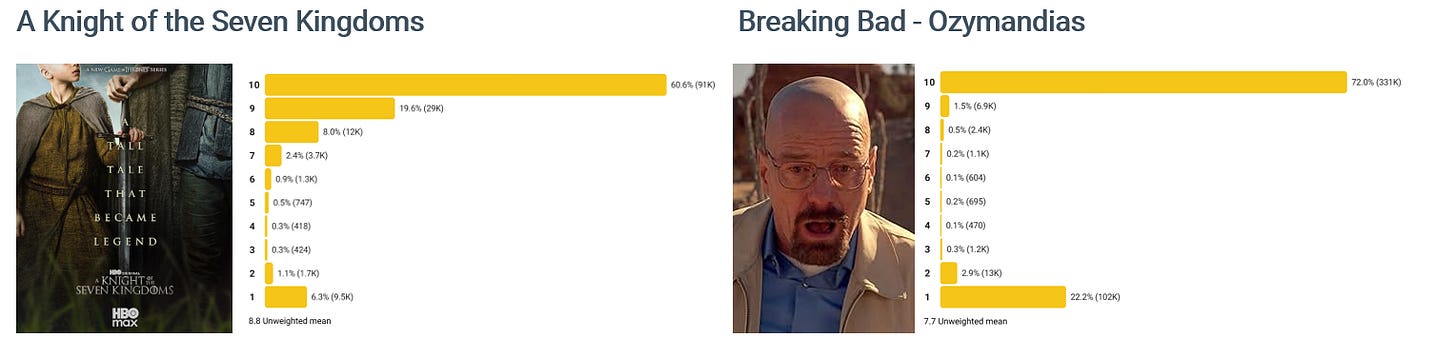

An episode of A Knight of the Seven Kingdoms briefly climbed into the same rarefied space as Breaking Bad’s “Ozymandias”. Within hours, users were mobilising. Some piled in with 10-star votes to defend the newcomer. Others countered with 1-star votes to protect an incumbent.

None of this is new. IMDb has long had to contend with coordinated vote-stuffing and defend against the claim that its official “IMDb Rating” is biased and/or broken.

But how much do one- and ten-star reviews actually affect the final IMDb score? I want to take you on a journey behind the numbers to provide context for the current (and future) arguments.

To do this, I looked at over 85 million votes cast on IMDb across 73,000 movies.

What is an “IMDb Rating” anyway?

On the surface, it is a single number between 1 and 10, rounded to a single decimal place. Most people use it as a quick, effective way to say whether a film is any good. For example:

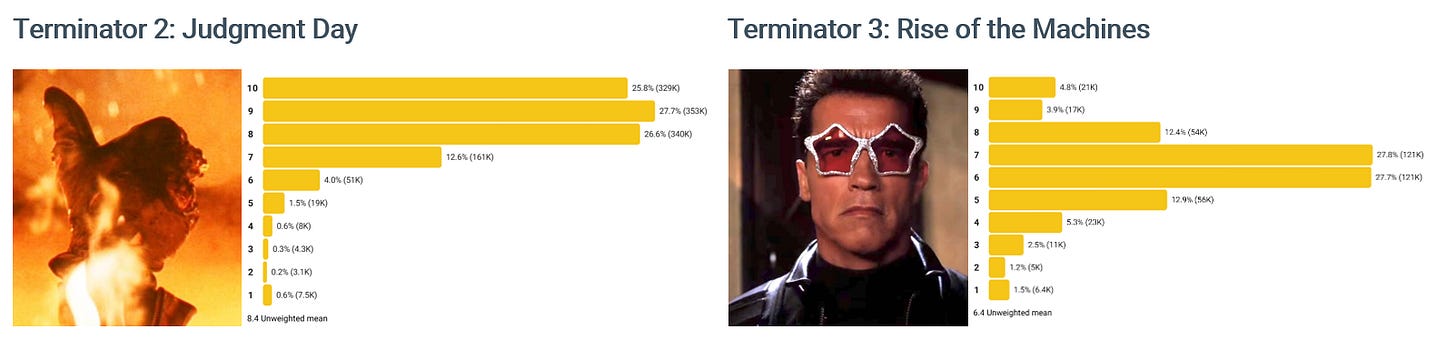

Terminator 2: Judgment Day has a score of 8.6 out of ten, putting it in the top 3.5% of movies on IMDb.

Terminator 3: Rise of the Machines has a score of 6.3, putting it in the bottom 52.2%.

We all like a single, specific score. It looks precise, implies consensus, and is easy to quote. It’s also useful: a quick glance at a movie’s IMDb rating is often enough to nudge us towards or away from investing time in it.

And yet, as soon as you put even a modicum of scrutiny on this number, you realise that it is deeply flawed. Among the problems with it are:

It pretends we agree. A single number implies that there is an objectively ‘correct’ measure of a film’s quality, hiding any sense of polarisation or disagreement.

It ignores the extremes of love and hate. Extreme and intense feelings matter. We all have movies we will defend to our last breath, and others we couldn’t be paid to watch again. A single rating reflects neither the extreme love behind 10 out of 10 votes nor the extreme dislike of those scoring it just 1. (More on this in my piece from a few weeks ago, What makes people never want to rewatch a great film?).

What is it even measuring?! Some see the IMDb score as a signal of a film’s inherent artistic quality, as in “This is a good film”. It can also be used as a reflection of a film’s appeal, as in “This is a popular film”. Another reading could be of a movie that people want to see more of, as in “I support this kind of movie”.

It doesn’t tell us what we actually want to know. If you’re picking a movie to watch, so many factors go into your choice that a single, averaged number from the wider public is close to useless. I would guess that how entertaining a film is matters more to most people than its artistic merit. Schindler’s List is the 6th-highest-rated film on IMDb at 9.0, but few would casually add it to their list when looking for a movie to pass the time. (See Which types of movies make money despite receiving bad reviews from critics?).

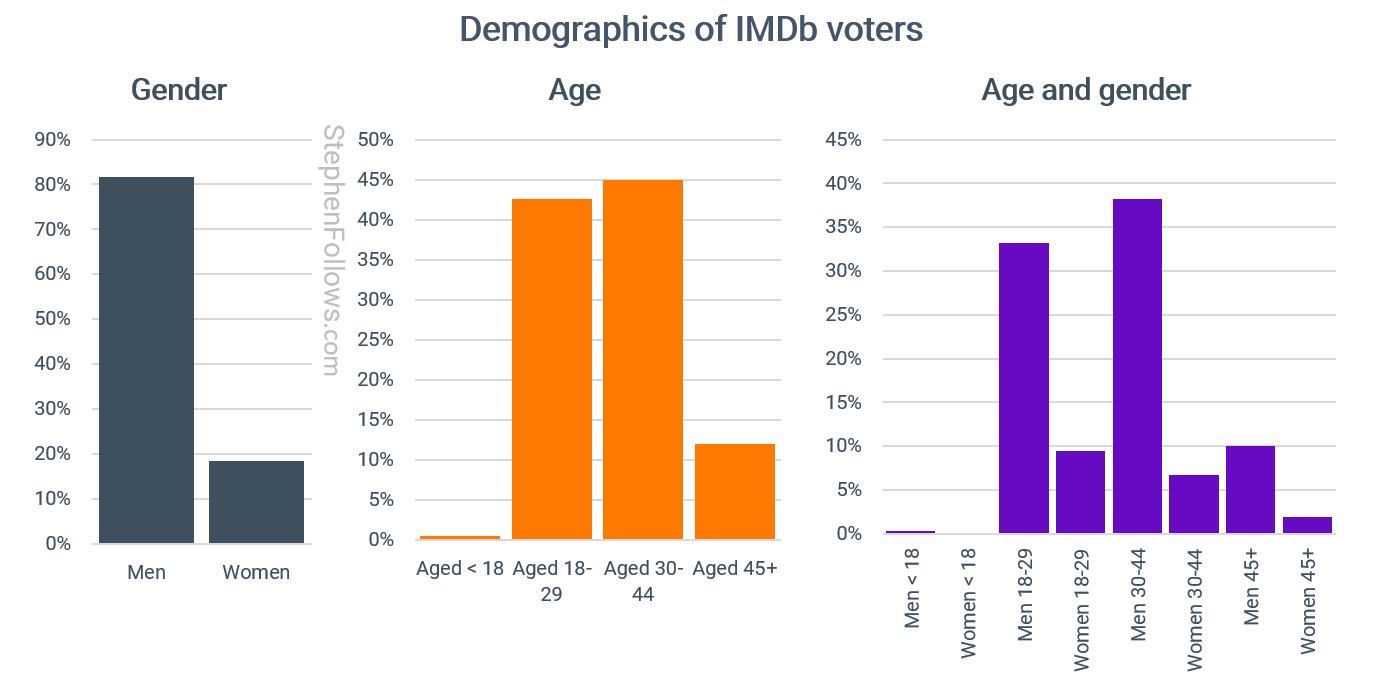

It ignores who is voting. People are not in a single homogeneous group, and so a single score ignores age, gender, location, cinephilia, etc. Worse than that, one group may dominate: when I last crunched the data, 81.7% of IMDb votes were cast by men, and 45.0% by people aged 30-44.

Early voting is not representative. People who see a movie early behave differently from those who see it later, but a single average cannot distinguish between them. This matters most when the score influences audience behaviour in the crucial opening week of a movie’s release.

It can be gamed. Vote stuffing to increase or decrease a movie’s score is rife. More on that in a moment.

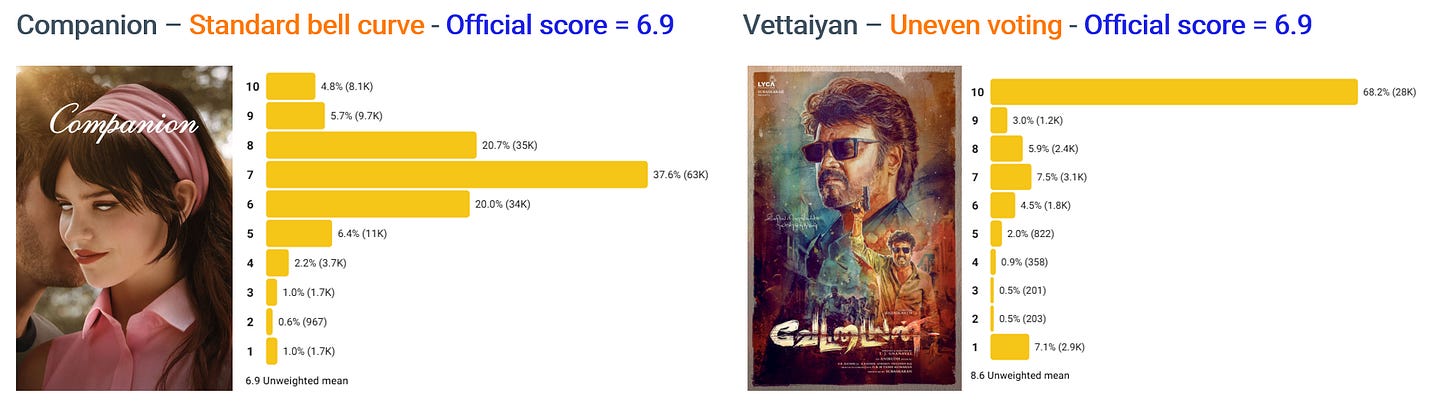

Both the films below (Companion and Vettaiyan) have an official IMDb score of 6.9, but show very different voting patterns.

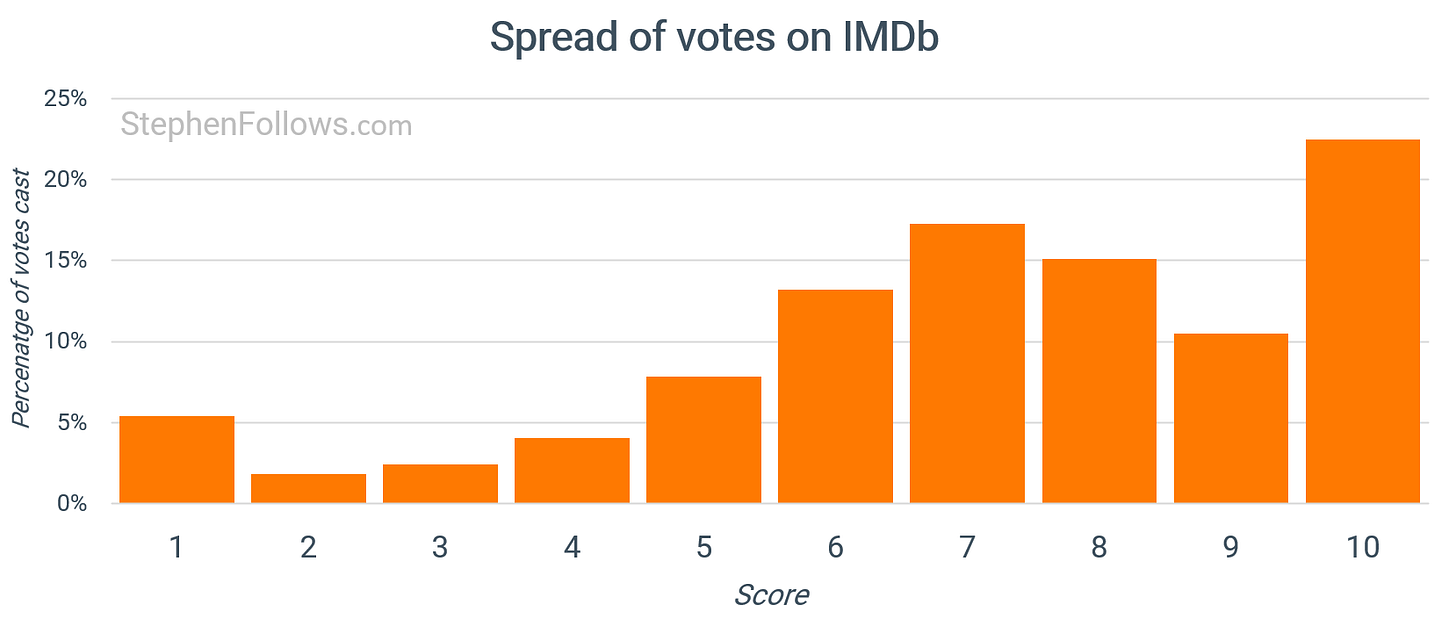

An uneven distribution of votes is not uncommon.

This is the world in which IMDb operates when it calculates its official IMDb Rating. The raw data it deals with (i.e., the votes cast) are frequently extreme, reflecting a desire to move the needle rather than the voter’s measured opinion.

There are so many films with voting patterns that simply cannot reflect what viewers think of the movie's quality.

How many IMDb scores are skewed?

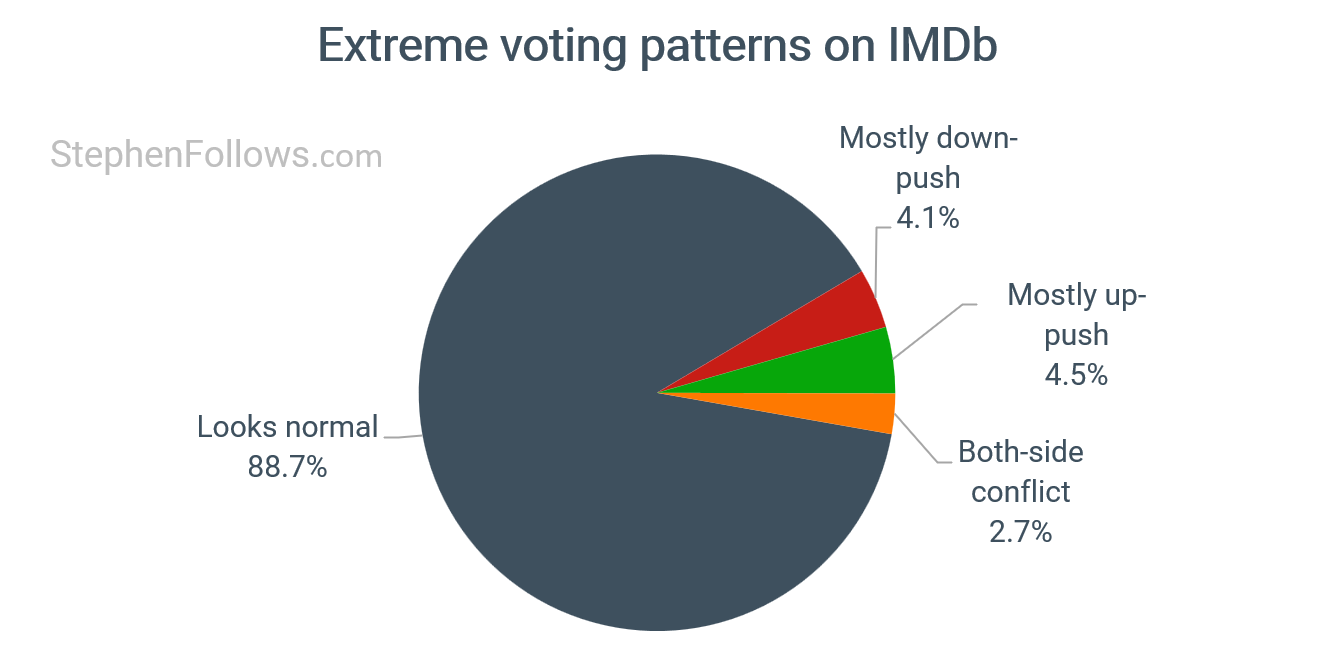

There are three ways in which a film could be affected by vote stuffing:

Push-down, where unusually heavy low-end voting (especially 1-star) is dragging down the average.

Push-up, where unusually heavy high-end voting (especially 10-star) is bringing up the average.

Collision voting, where both ends are elevated at once (lots of 1s and lots of 10s), so the score can move in either direction, depending on which edge is stronger.

To get a sense of how many films experience these forces, I classified each film by comparing its 1-star and 10-star vote shares against what is normal for films with similar average scores in the same country.

If neither extreme is unusually high, it is classified as normal.

If only 1-star is unusually high, it is push-down.

If only 10-star is unusually high, it is push-up.

And if both extremes are unusually high at the same time, it is classified as collision voting.
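The four rules above fit in a small function. This is a minimal sketch, not the author’s code: it assumes each film already has a z-score for its 1-star share and for its 10-star share (the Notes at the end describe how those are measured against peers) and uses the z ≥ 2 threshold the Notes give. The enum and function names are hypothetical.

```python
from enum import Enum


class EdgePattern(Enum):
    NORMAL = "normal"
    PUSH_DOWN = "push-down"
    PUSH_UP = "push-up"
    COLLISION = "collision"


# A share counts as "unusually high" at z >= 2, the threshold given in the Notes.
Z_THRESHOLD = 2.0


def classify(z_one_star: float, z_ten_star: float,
             threshold: float = Z_THRESHOLD) -> EdgePattern:
    """Assign a film to one of the four edge-pressure buckets (hypothetical helper)."""
    low_end = z_one_star >= threshold    # unusually many 1-star votes
    high_end = z_ten_star >= threshold   # unusually many 10-star votes
    if low_end and high_end:
        return EdgePattern.COLLISION
    if low_end:
        return EdgePattern.PUSH_DOWN
    if high_end:
        return EdgePattern.PUSH_UP
    return EdgePattern.NORMAL


if __name__ == "__main__":
    # Made-up (z_one_star, z_ten_star) pairs, one per bucket.
    for z1, z10 in [(0.4, 1.1), (3.2, 0.5), (-0.3, 2.7), (2.1, 2.4)]:
        print(z1, z10, classify(z1, z10).value)
```

Checking collision first matters: a film with both extremes elevated would otherwise be caught by the push-down branch.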

This reveals that over 10% of films on IMDb are experiencing extreme edge pressures.

Is it getting worse?

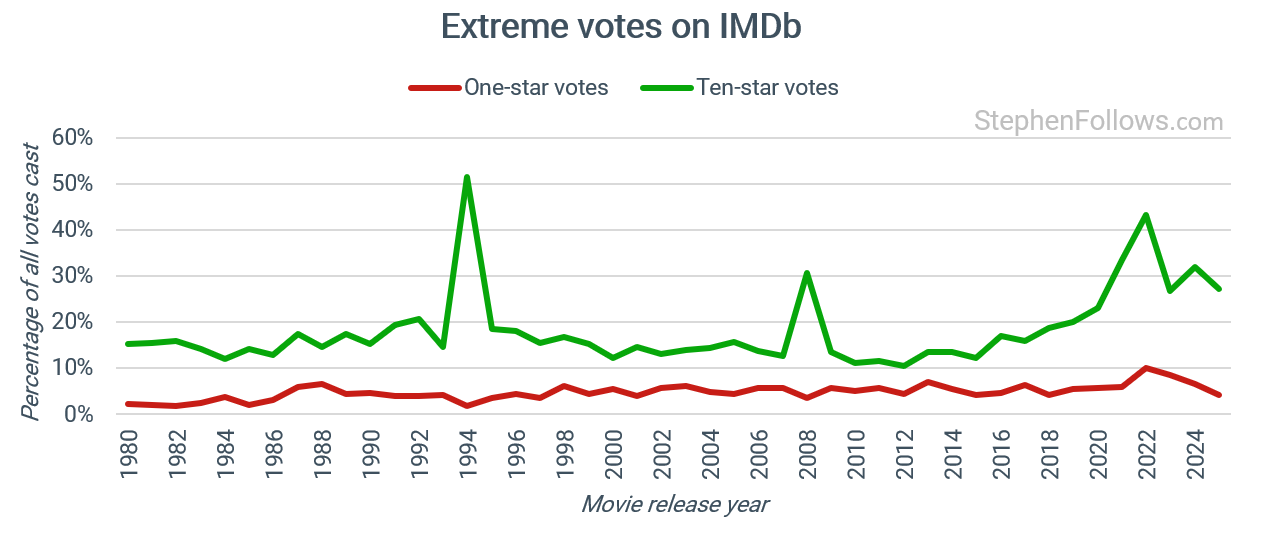

In a word, yes.

The chart above shows the rate of extreme voting over time. Since the early 2010s, the share of 10-star votes has increased massively, while the share of 1-star votes rose slightly before returning to its longer-term average in the most recent years.

Eagle-eyed readers will have already spotted two anomalous years in the graph above. The number of ten-star votes in 1994 and 2008 is strangely high. And this neatly highlights another issue with movie ratings - outliers.

The spike in those two years is almost entirely down to just two movies - The Shawshank Redemption (1994) and The Dark Knight (2008).

54.9% of all the votes cast for The Shawshank Redemption were ten stars. Given that it’s also the site’s most rated film (3.2 million votes to date), this is enough to disrupt the whole year’s ratings. Similarly, 45.6% of The Dark Knight’s 3.1 million votes were full marks.
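Why can one title move a whole year? If a year’s rate is computed by pooling every vote cast for that year’s films (an assumption about how the chart was built), each film counts in proportion to its vote count. The sketch below uses Shawshank’s figures from the text alongside three made-up placeholder films for 1994; the `Film` class and `pooled_ten_star_share` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Film:
    title: str
    votes: int
    ten_star_share: float  # fraction of this film's votes that are 10 stars


def pooled_ten_star_share(films, exclude=()):
    """Vote-weighted 10-star share across films, optionally dropping some titles."""
    kept = [f for f in films if f.title not in exclude]
    total_votes = sum(f.votes for f in kept)
    ten_star_votes = sum(f.votes * f.ten_star_share for f in kept)
    return ten_star_votes / total_votes


# Shawshank's figures come from the article; the other three films are placeholders.
films_1994 = [
    Film("The Shawshank Redemption", 3_200_000, 0.549),
    Film("Placeholder film A", 400_000, 0.15),
    Film("Placeholder film B", 250_000, 0.12),
    Film("Placeholder film C", 150_000, 0.10),
]

if __name__ == "__main__":
    print(f"{pooled_ten_star_share(films_1994):.0%}")  # 47%
    without = pooled_ten_star_share(films_1994, exclude={"The Shawshank Redemption"})
    print(f"{without:.0%}")  # 13%
```

An alternative is to give every film equal weight (or take a median of per-film shares), which stops one heavily voted title dominating; which is more useful depends on whether you want to describe votes or films.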

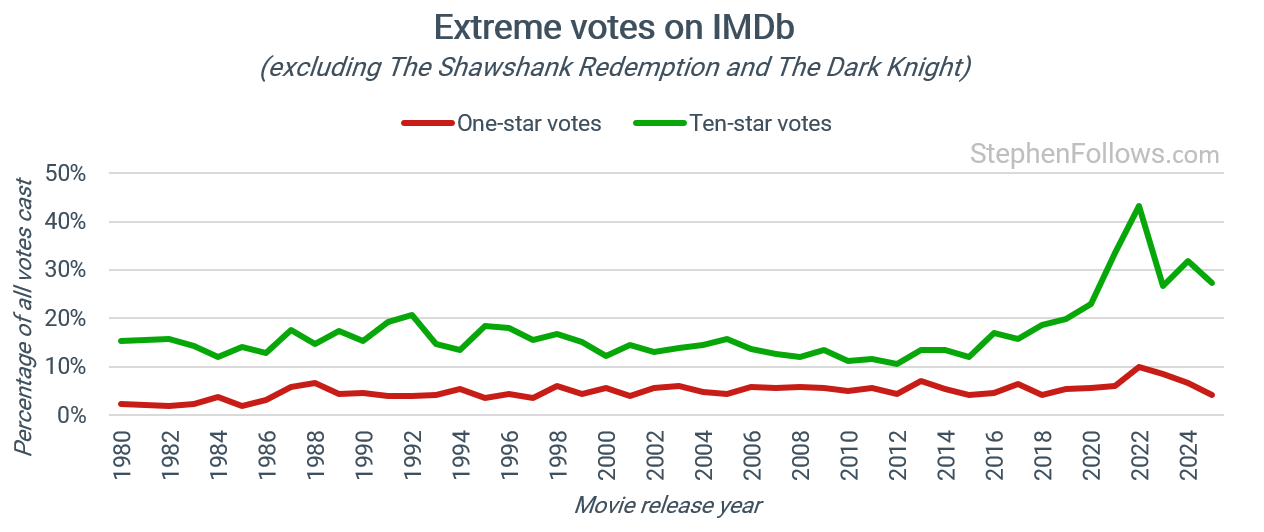

If we remove just those two titles, the chart reads a little clearer.

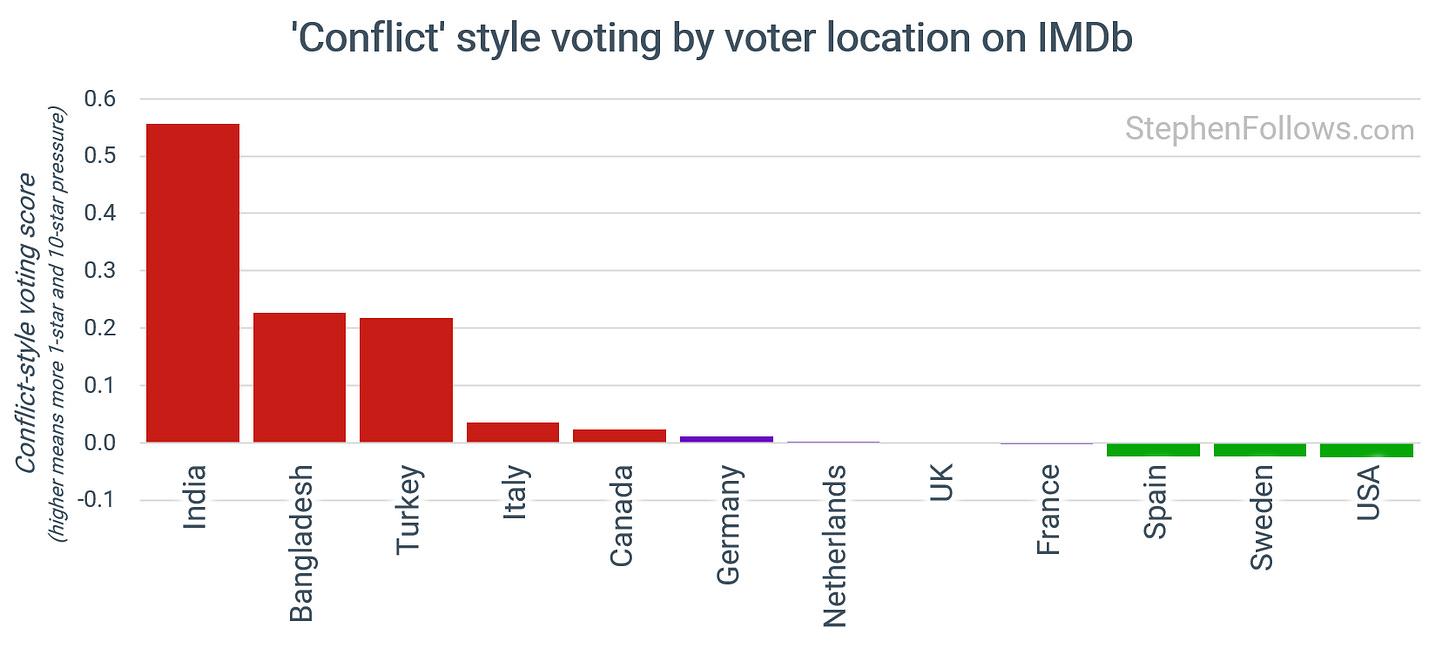

Where in the world is this happening?

These push-pull effects are not distributed equally.

IMDb now shows country-level vote breakdowns, giving the vote counts for the five highest-voting countries for each movie.

The differences between voters in different countries likely have two causes:

Cultural differences around what it means to be a fan. In some countries, vote-stuffing may be seen as a proper way to support your favourite movies or star, while in others it may be frowned upon, no matter the fervour of your fandom.

Movies of national relevance. Cinema has always been political (no matter what Wim Wenders says), and that’s never clearer than when a film tells a story associated with a nation’s identity. These are more likely to whip up pride and anger, leading to strategic voting.

Fans in India, Bangladesh, and Turkey are the most likely to cast one- or ten-star votes. Spanish, Swedish, and American fans are the least likely (among countries with enough voting data).
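A ranking like this can be sketched by pooling each country’s votes and keeping only countries above a minimum vote count. This is a simplified illustration under stated assumptions: the row format and the `MIN_VOTES` cut-off are hypothetical, the data are placeholders, and it uses the raw extreme-vote share rather than the peer-adjusted comparison used for the film classification.

```python
from collections import defaultdict

# Hypothetical cut-off for "enough voting data"; the article does not state one.
MIN_VOTES = 100_000


def extreme_share_by_country(rows, min_votes=MIN_VOTES):
    """Share of each country's votes that are 1 or 10 stars.

    rows: iterable of (country, one_star_votes, ten_star_votes, total_votes),
    one row per film-country pair. Countries with fewer than min_votes votes
    in total are left out.
    """
    extreme = defaultdict(int)
    total = defaultdict(int)
    for country, ones, tens, votes in rows:
        extreme[country] += ones + tens
        total[country] += votes
    return {c: extreme[c] / total[c] for c in total if total[c] >= min_votes}


if __name__ == "__main__":
    # Placeholder rows, not real IMDb figures.
    rows = [
        ("Country X", 30_000, 50_000, 150_000),
        ("Country Y", 5_000, 20_000, 200_000),
        ("Country Z", 1_000, 2_000, 10_000),  # too few votes, dropped
    ]
    shares = extreme_share_by_country(rows)
    for country, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
        print(f"{country}: {share:.1%}")
```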

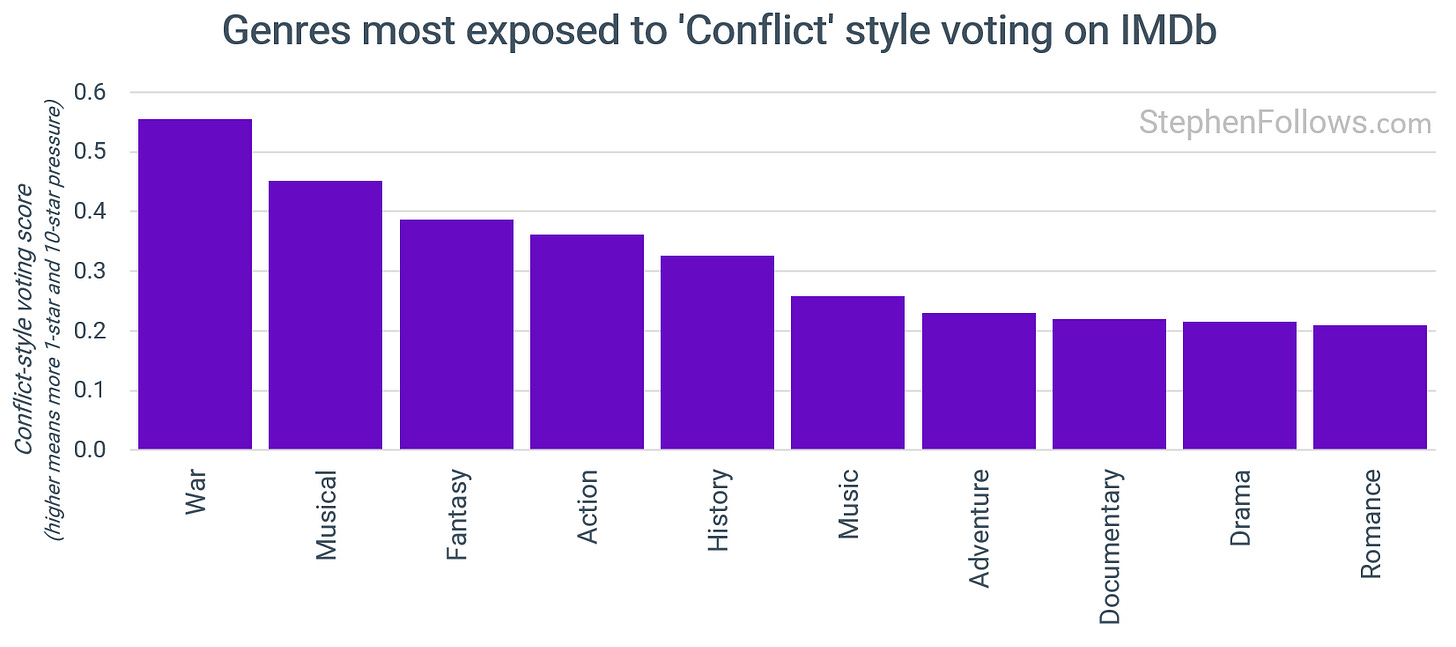

What types of films attract the most abnormal voting?

Genres attract different kinds of audience behaviour.

Perhaps appropriately, war films attract the most abnormal voting. I put this down to how often they tell stories of politics, identity, and national history.

It seems reasonable to assume that most war films cast one side as more virtuous than another, so more people are likely to be offended by a war film than by a romance. (I guess one advantage of making your target an uncaring, shitty boyfriend rather than a particular national group is that the very people you are slating don’t know, or care, that it’s them being attacked.)

This highlights a point I made earlier: a 7.2 average can hide wildly different realities. For a rom-com, it might mean most people are genuinely settled around a 7. For a war film, the same 7.2 could reflect a split crowd, with one chunk handing out 10s and another voting it down to 1.
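A worked example makes the point concrete. The two histograms below are invented (they are not real films) and were chosen so that both average exactly 7.2; the spread and the share of votes at the extremes tell them apart.

```python
import statistics

# Hypothetical histograms: position 0 holds 1-star counts, position 9 holds 10-star counts.
ROM_COM = [1, 0, 0, 1, 4, 15, 42, 25, 9, 3]
WAR_FILM = [25, 0, 0, 0, 0, 0, 10, 10, 5, 50]


def expand(hist):
    """Turn a 1-10 histogram into a flat list of individual votes."""
    return [score for score, count in zip(range(1, 11), hist) for _ in range(count)]


def summarise(hist):
    """Mean, population standard deviation, and share of 1s and 10s."""
    votes = expand(hist)
    return {
        "mean": statistics.fmean(votes),
        "stdev": statistics.pstdev(votes),
        "extreme_share": (hist[0] + hist[9]) / sum(hist),
    }


if __name__ == "__main__":
    for name, hist in [("rom-com", ROM_COM), ("war film", WAR_FILM)]:
        print(name, {k: round(v, 2) for k, v in summarise(hist).items()})
    # rom-com {'mean': 7.2, 'stdev': 1.26, 'extreme_share': 0.04}
    # war film {'mean': 7.2, 'stdev': 3.71, 'extreme_share': 0.75}
```

Both films would be reported as 7.2, but three-quarters of the war film’s votes sit at the two ends of the scale.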

Who’s doing this?

So far, I have loosely referred to the people casting these edge votes as “fans”, but the truth is likely to be messier than that. They could include:

Supporters of a movie, star, story, or theme. These are people who want their interests to be better regarded and/or to receive the recognition they so obviously deserve.

Haters of people, ideas, or trends. Lots of movies get systematically downvoted because they take a position on politics, race, or religion. Or simply because it’s something young women like.

Representatives of the people involved. I’m sure there must be people representing a tricky client who have twigged that when their client’s projects score higher on IMDb, the client becomes more tolerable. Plus, growing recognition for the client’s work may lead to higher fees or better terms in future negotiations.

The stars themselves. Heaven forbid that I suggest, for even a moment, that actors might care one jot about what people think of them or their movies. Equally, I know that filmmakers never read reviews, as they are constantly telling me so.

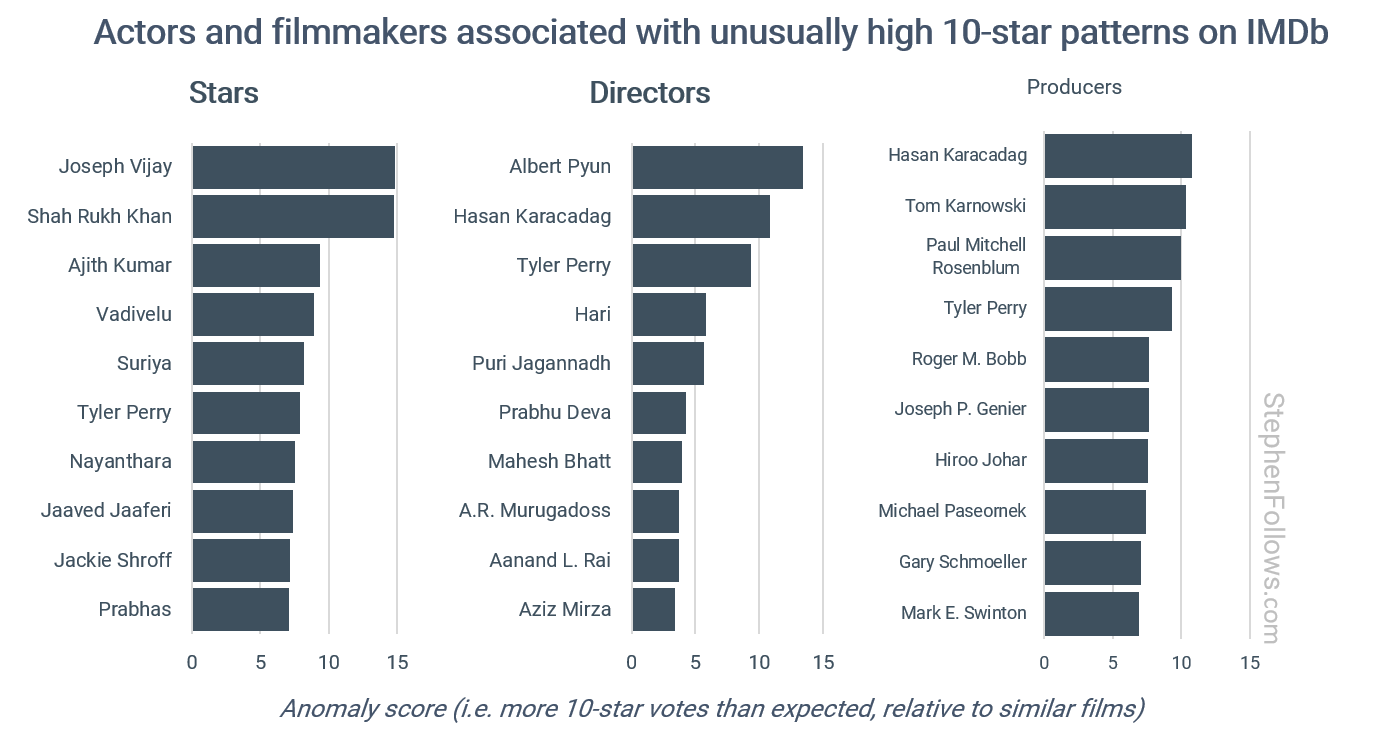

Given the large number of people who may be motivated to affect an IMDb score, we cannot say that just because a certain individual repeatedly benefits from vote stuffing, they are the cause of it. It’s very possible they don’t even know it’s happening.

So as a public service to those unwitting winners, I looked into which stars and filmmakers seem to repeatedly have their projects targeted for absurdly large numbers of 10-star reviews, when compared to films with similar overall scores and vote volumes.
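One way to turn film-level anomalies into a per-person list is to group films by credited person and count how many of each person’s films have an unusually high 10-star share. This is a hedged sketch, not the author’s method: it assumes you already have, for each film, a z-score of its 10-star share against films with similar overall scores and vote volumes, and the function name, `min_films` cut-off and sample data are all hypothetical.

```python
from collections import defaultdict
from statistics import fmean


def rank_beneficiaries(credits, ten_star_z, min_films=3, threshold=2.0):
    """Rank people by how often their films show unusually heavy 10-star voting.

    credits: iterable of (person, film_id) pairs.
    ten_star_z: {film_id: z-score of the film's 10-star share vs. peers with a
                 similar overall score and vote volume}.
    Returns (person, n_films, n_flagged, mean_z) tuples, most-flagged first.
    """
    films_by_person = defaultdict(set)
    for person, film in credits:
        if film in ten_star_z:
            films_by_person[person].add(film)

    rows = []
    for person, films in films_by_person.items():
        if len(films) < min_films:
            continue  # too few credits to call it a pattern
        zs = [ten_star_z[f] for f in films]
        flagged = sum(z >= threshold for z in zs)
        rows.append((person, len(films), flagged, fmean(zs)))
    # Most flagged films first; ties broken by the average anomaly.
    rows.sort(key=lambda r: (r[2], r[3]), reverse=True)
    return rows


if __name__ == "__main__":
    credits = [("Person A", "f1"), ("Person A", "f2"), ("Person A", "f3"),
               ("Person B", "f1"), ("Person B", "f4"), ("Person B", "f5"),
               ("Person C", "f2")]
    z = {"f1": 3.1, "f2": 2.4, "f3": 0.2, "f4": -0.5, "f5": 0.1}
    for row in rank_beneficiaries(credits, z):
        print(row)
```

As the text stresses, a high ranking here only shows that someone’s projects repeatedly attract the pattern, not who caused it.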

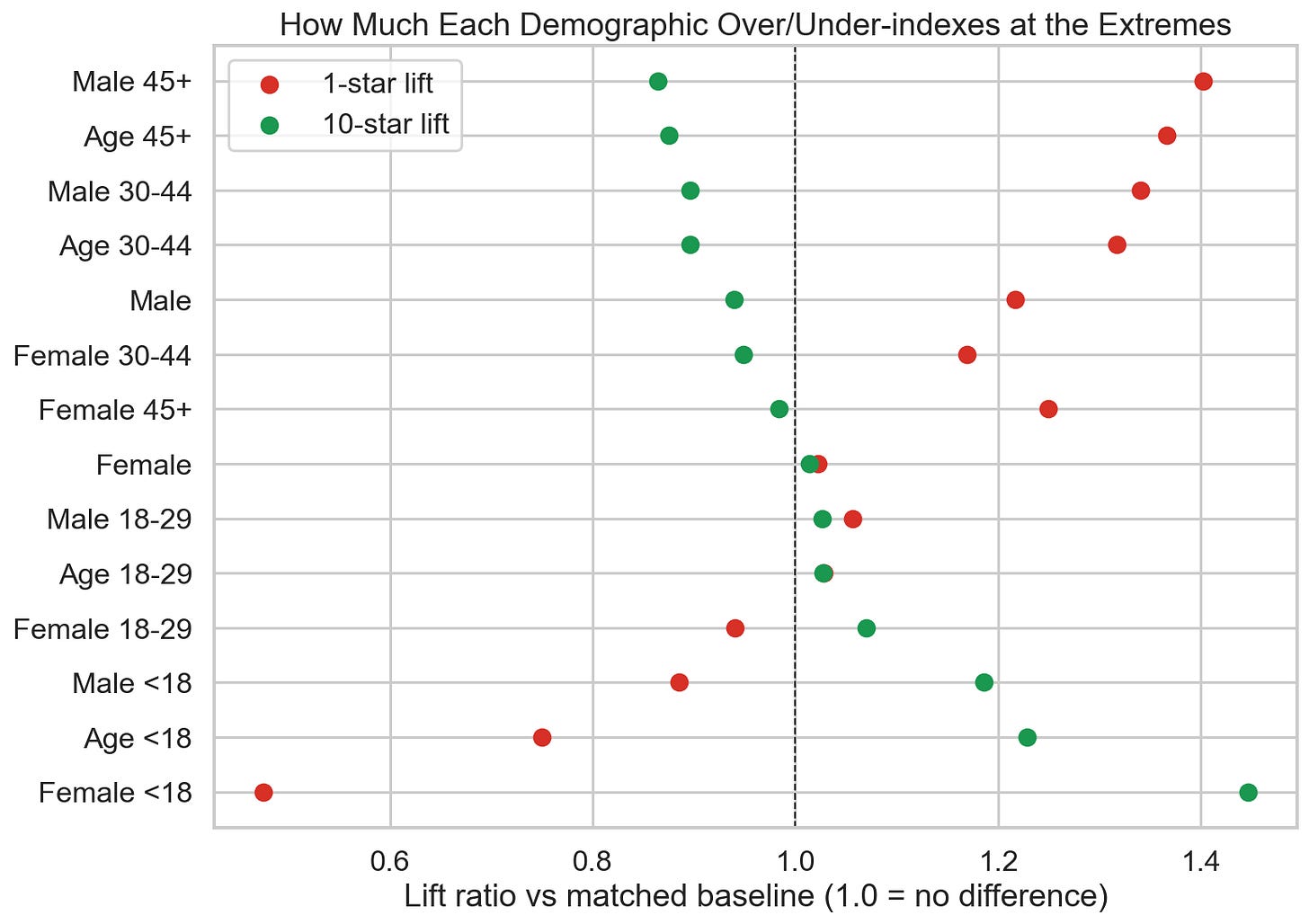

Is this a problem with kids today?

The demographic data on IMDb used to be somewhat limited, and today it’s nonexistent. But using older data, and some clever maths, we can estimate who’s behind the extreme behaviour.

I mapped each demographic group’s average rating onto the actual vote shape of the films they rated. From that, I could infer how much more or less likely that group is to use 1-star or 10-star relative to a matched baseline.

The chart below shows the results, albeit in a slightly unintuitive format. Here’s how to read it:

Each row is a demographic group, using IMDb’s classification (note that in this case, ‘old’ means everyone between 45 years old and death).

The red dots show that group’s relative tendency toward 1-star voting, and the green dots show its relative tendency toward 10-star voting.

The vertical dashed line at 1.0 means “no difference from baseline”.

So if a dot sits to the right of 1.0, that group uses that extreme more than expected. If a dot sits to the left of 1.0, the group uses it less than expected.

The farther away from 1.0, the stronger the tilt.
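The numbers on that chart are ratios against a baseline. The sketch below shows only the final ratio step, under loud assumptions: it takes a group’s estimated 1-star and 10-star shares as given (the inference from average ratings that the text describes is not reproduced here) and matches the group to a baseline band by its average rating. The `BASELINE` figures and function names are invented for illustration.

```python
# Placeholder baseline: typical 1-star and 10-star shares for voters whose average
# rating falls in each band. These numbers are invented for illustration.
BASELINE = {
    6.5: {"one": 0.06, "ten": 0.14},
    7.0: {"one": 0.05, "ten": 0.17},
    7.5: {"one": 0.04, "ten": 0.21},
}


def nearest_band(avg_rating, bands=BASELINE):
    """Pick the baseline band closest to a group's average rating."""
    return min(bands, key=lambda band: abs(band - avg_rating))


def tilt(group_avg, one_share, ten_share, bands=BASELINE):
    """Ratio of a group's extreme-vote shares to a baseline matched on average rating.

    1.0 means no difference; above 1.0 the group uses that extreme more than
    expected, below 1.0 less.
    """
    base = bands[nearest_band(group_avg, bands)]
    return {"one_star": one_share / base["one"], "ten_star": ten_share / base["ten"]}


if __name__ == "__main__":
    # Hypothetical group: averages 7.1, uses 1-star 4% and 10-star 23.8% of the time.
    print({k: round(v, 2) for k, v in tilt(7.1, 0.04, 0.238).items()})
    # {'one_star': 0.8, 'ten_star': 1.4}
```

On the chart, this hypothetical group’s red dot would sit left of 1.0 and its green dot right of it.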

What we discover is that young people love things too much, and old people hate things too much.

So how does IMDb respond to this?

I hope you have grasped by now just how hard IMDb’s job is. They are being bombarded by millions of targeted votes, designed to maintain a score that many people take seriously and which is a key part of IMDb’s brand and credibility. I envy neither them nor the task they face.

IMDb does not have a creative agenda. It would not work to adjust scores on a case-by-case basis, partly because they are trying to be apolitical, but also because dealing with the sheer number of people who believe they are suffering from “unfair” voting would be unworkable.

They need a single system that applies equally to all films.

(Well, the voting on This Is Spinal Tap claims to go up to eleven, but (a) that’s the only case I know of; (b) it doesn’t really, it just says that on the film’s homepage; and (c) to be fair, that’s a pretty good joke).

To tackle this, the official IMDb rating is not an average of all the votes. Instead, they use a secret algorithm that seeks to account for extreme voting while acknowledging that some movies do actually deserve large numbers of one- or ten-star votes, as we've seen with the Batmen of Justice League and The Dark Knight, respectively.

How does this algorithm work? It’s a proprietary secret. And it should be, as sharing the formula can only help those looking to manipulate the system.

My best guess is that it uses:

The arithmetic mean from the full histogram,

The total vote count,

Signals about the vote distribution shape, especially extreme buckets,

Interactions between 1-star and 10-star pressure, and

Possibly trust-weighting based on user data, such as account age, voting history, behavioural consistency, detection of coordinated bursts and other anti-bot measures.
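To make the first few ingredients concrete, here is a sketch of three building blocks. The real algorithm is secret, so none of this is IMDb’s method. `histogram_mean` is the plain arithmetic mean; `bayesian_weighted` has the same shape as the weighted-rating formula IMDb has published in the past for its Top 250 chart, which shrinks a film’s mean towards a prior when it has few votes; `damped_mean` is a purely hypothetical way of down-weighting the extreme buckets. The prior mean, `min_votes` and `edge_weight` values are arbitrary.

```python
def histogram_mean(hist):
    """Arithmetic mean of a 1-10 vote histogram (hist[0] is the 1-star count)."""
    total = sum(hist)
    return sum(score * count for score, count in zip(range(1, 11), hist)) / total


def bayesian_weighted(mean, votes, prior_mean, min_votes):
    """Shrink a film's mean towards a prior; the fewer the votes, the stronger the pull.

    Same shape as the Top 250 formula IMDb has published in the past:
    WR = v / (v + m) * R + m / (v + m) * C
    """
    v, m = votes, min_votes
    return v / (v + m) * mean + m / (v + m) * prior_mean


def damped_mean(hist, edge_weight=0.5):
    """Hypothetical edge damping: each 1-star and 10-star vote counts as edge_weight votes."""
    weights = [edge_weight] + [1.0] * 8 + [edge_weight]
    weighted_votes = sum(w * c for w, c in zip(weights, hist))
    weighted_sum = sum(w * c * s for w, c, s in zip(weights, hist, range(1, 11)))
    return weighted_sum / weighted_votes


if __name__ == "__main__":
    # Placeholder histogram with heavy 1-star and 10-star buckets.
    hist = [25, 0, 0, 0, 0, 0, 10, 10, 5, 50]
    raw = histogram_mean(hist)
    print(round(raw, 2))                                            # 7.2
    print(round(damped_mean(hist), 2))                              # 7.32
    print(round(bayesian_weighted(raw, sum(hist), 6.5, 1_000), 2))  # 6.56
```

Note that damping does not simply lower a score: it pulls the result towards the middle votes, which in this placeholder histogram average 7.8, so the damped score ends up slightly higher than the raw mean.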

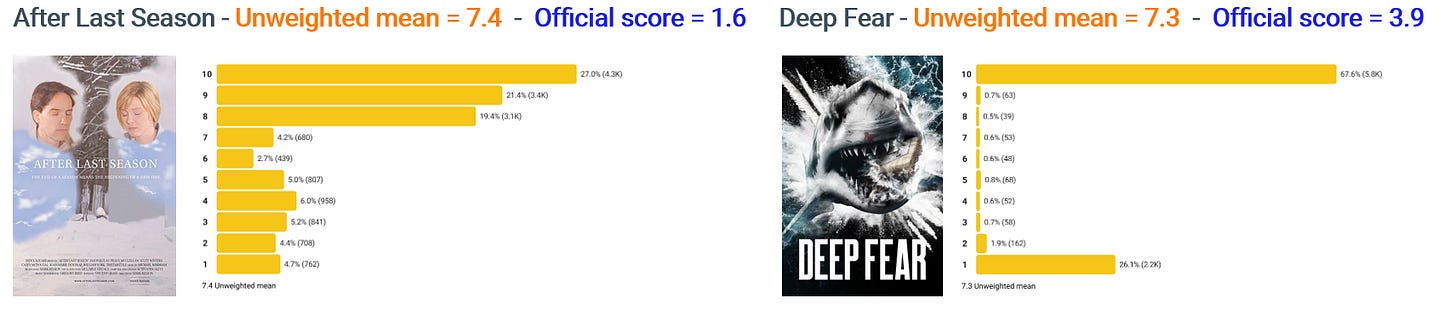

Below are two extreme examples. Both have an unweighted mean of around 7.3 and official scores well below that mean, yet their official scores differ from each other because their voting patterns differ.

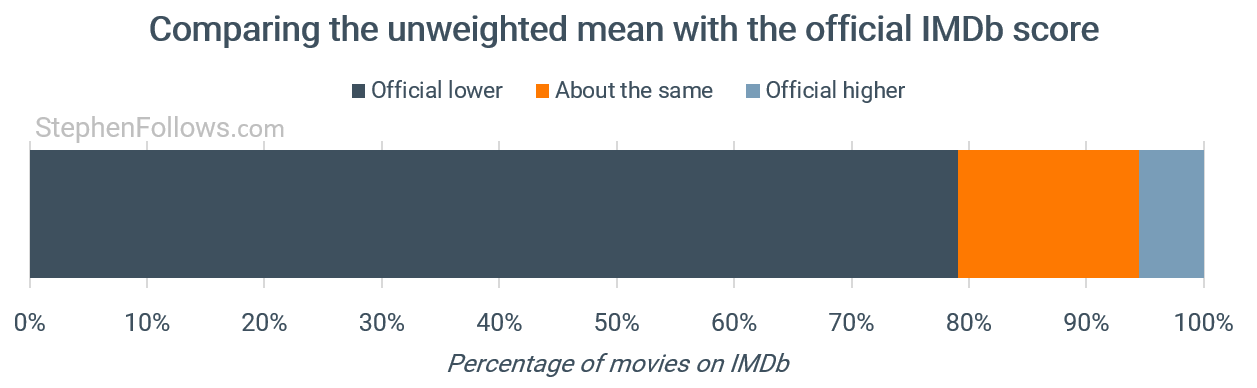

In almost four out of five movies, the official IMDb score is lower than the arithmetic mean. I presume this is because every project has at least some people motivated to stuff 10-star votes - i.e. the cast and crew!

The Goat Life is one of the rare films where the official score (7.1) is higher than the unweighted mean (5.7).

IMDb compared with Letterboxd

IMDb also operates in a more combative environment than other sites. Because it’s the biggest site and, for many people, the ‘site of record’, it attracts the most attention from those wanting to make a point, promote a film they like, or punish a film or person they don’t.

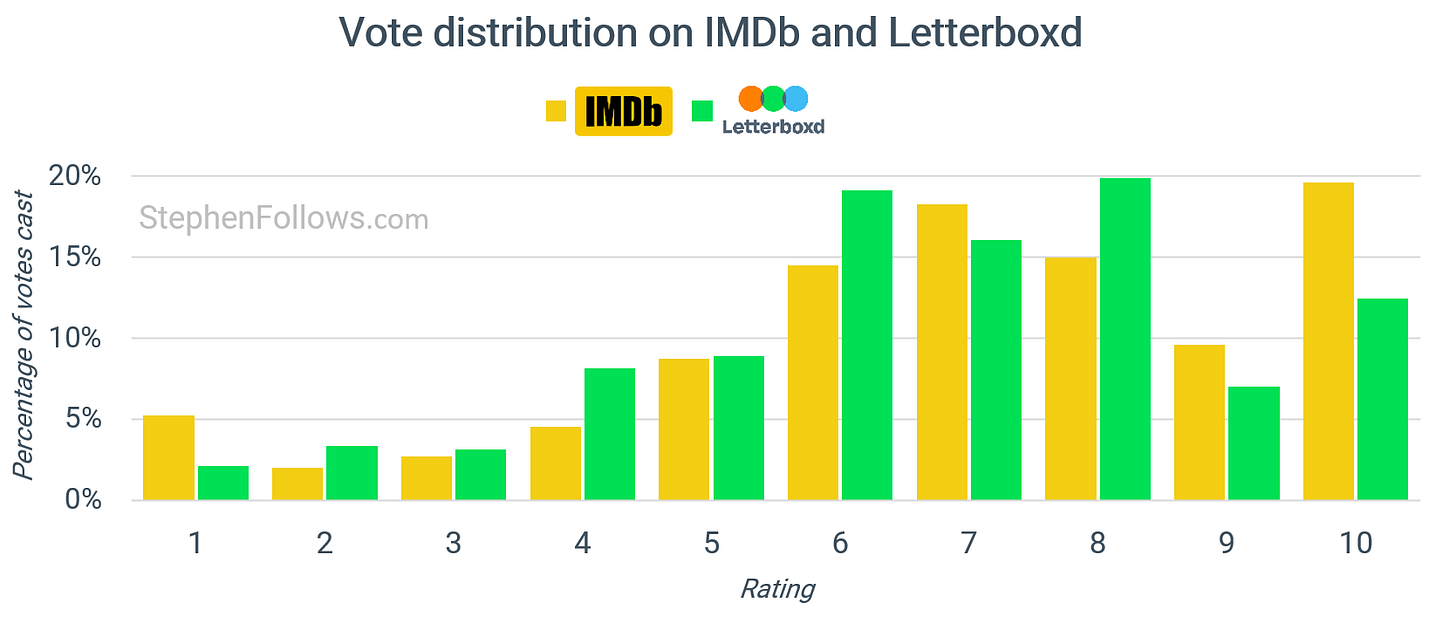

A quick check of comparable scores on the cinephile database Letterboxd shows that IMDb has roughly twice the share of extreme votes.

Overall, the two sites agree quite a bit, with a correlation of 0.80 for films with over 1,000 votes on both platforms.

In defence of the IMDb user score

I’ll be honest and say I think the IMDb system does a pretty good job, given the vast influx of messy data and the need for a single universal solution.

Here’s the real truth - this is not a problem with IMDb. It’s a problem with us. By us, I mean both:

The people who vote. If the invention of the internet has proved anything, it’s that when people can hide behind a keyboard, they act like trash. In this article, I have been rude about actors, agents, boyfriends, Batmen, and suggested that the next life milestone a 45-year-old has to look forward to is death. I wouldn’t do any of this in person. When you add to the mix that people feel very passionate about movies they love, this leads to a lot of mean behaviour.

The people who use the IMDb score. As I said at the top, it is foolish to think that any single number can flatten all the factors we’ve seen and accurately reflect a piece of art. IMDb does its best to provide a quick guide, but at the end of the day, we only have ourselves to blame if we think the score matters, or if we are offended by it.

The IMDb user score is not wrong - we're just using it wrong.

Epilogue

It’s easy for this to become purely about maths, but there is a human side to this topic.

When a movie is targeted for mass negative commentary and voting, real people get hurt. The damage falls on directors, producers, cast and crew. A distorted score shapes perception, affects confidence, influences press coverage, and becomes part of the public record.

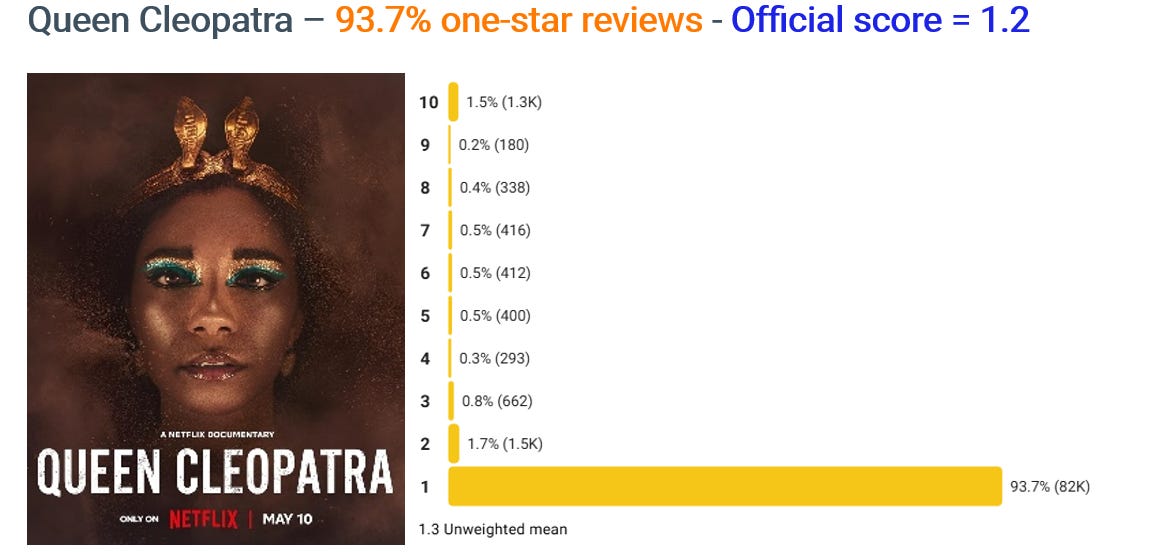

One such person is the Iranian-born British artist, director, and screenwriter Tina Gharavi. Gharavi is the director of the four-part miniseries Queen Cleopatra starring Jada Pinkett Smith, Adele James, and Craig Russell.

The series currently has an implausibly low IMDb score of 1.2 out of 10. One-star votes account for 93.7% of the 88,000 votes cast. (If we drill down to just voters in Egypt, it’s even worse, at over 97% one-star votes.)

That’s half the rating the classic stinker Battlefield Earth got from roughly the same number of votes cast. It’s also at odds with the critics' view, with the project scoring 45 out of 100 on Metacritic.

I spoke to Tina about this, and she explained the effect this has had:

The reviews and ratings were part of a coordinated and politically motivated hate campaign, largely in response to the depiction of a Black Cleopatra. It was never truly about the quality of the production. I welcome rigorous debate about history, representation, and creative interpretation - that’s part of the job. But this did not feel like debate. It felt like a personal and racialised attack.

Ironically, the campaign generated enormous publicity and drove viewership. The series reached number four globally on Netflix and number one in several territories - probably not the outcome its organisers intended. What is regrettable, however, is that malicious and racially motivated voting can remain embedded on major platforms long after the initial outrage cycle has passed.

It’s not hard to imagine how Tina feels. She is experiencing a sustained and targeted attack on her and her artistic work, from people who are unlikely to have watched it.

When it first happened, Tina reached out to IMDb to note that the voting was anomalous and asked whether they agreed. IMDb’s reply included the following:

Please rest assured that the rating for the titles in your filmography is correct, as generated using the same method employed to calculate the rating for all titles listed on IMDb.com, which includes numerous safeguards to detect and minimize the effects of attempts to skew the results. Please also note that some of those titles have received a very small number of votes, which makes them susceptible to a lot of variability.

The hard thing to acknowledge here is that IMDb isn’t wrong. Assuming they are successfully removing suspicious votes from bots, the histogram does reflect how 88,000 people voted.

One could argue that they are platforming hateful views, as expressed through votes, but that’s not the same as being at fault.

All ratings are inherently subjective, and we can’t say that one subjective reason is more legitimate than another. That is partly the nature of subjective opinion, and partly because we have no way of knowing what made people vote the way they did.

But where does that leave people like Tina? In her words:

A few years on, I’ve learned to process it differently - but that doesn’t mean it leaves no mark. When a project is reduced to a number that has clearly been distorted, it enters the public record in a way that can affect perception, opportunity, and confidence - not just for me, but for the hundreds of people who worked on it.

I understand the philosophical argument that all votes are subjective. But when tens of thousands of one-star votes appear before most people have even had the chance to watch the work, it’s hard to see that as organic audience response.

What concerns me most is the precedent it sets - particularly for work that challenges dominant narratives or centres historically marginalised perspectives. If coordinated outrage can permanently brand a project with an artificially low score, that creates a chilling effect. Artists should be accountable for their work, but platforms also have a responsibility to examine how their systems can be weaponised.

Today’s research has reminded me that so often the industry reduces things to data and forgets that there are real people at the sharp end of these debates.

I wanted to leave the final word to Tina:

When a platform allows coordinated, bad-faith campaigns to permanently distort a work’s public record under the banner of “neutrality,” it is no longer a passive host of opinion but an active amplifier of harm - and with that amplification comes responsibility.

Notes

The research today focuses on movies, not TV or other types of content.

All the demographic data covers the period up to the end of 2015. It’s been a while since I last looked at the age and gender of IMDb voters, and since then IMDb has stopped providing that data, instead showing combined scores split by the top five countries for each movie. It’s entirely possible that the demographics of IMDb’s user base have radically changed over the past decade, although that feels unlikely.

Years in the charts refer to the movie’s release year, not the year the votes were cast. For movies released since around 2000, it’s reasonable to assume that most votes were cast within the first year or so of release, but for anything before around 1995, voters had the benefit of hindsight.

To define the “push-down / push-up / collision” categories, I measured how unusual a movie’s one-star and ten-star shares were relative to peers in the same country and a similar score band. This creates standardised anomaly scores (z-scores). Using a threshold of z ≥ 2:

Push-down = unusually high one-star, but not ten-star

Push-up = unusually high ten-star, but not one-star

Collision = both unusually high

Normal = neither unusually high
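The peer comparison described in these notes can be sketched as follows. This is a minimal illustration under assumptions the notes do not pin down: peers are films from the same country whose average falls in the same fixed-width score band (0.5 points here, an arbitrary choice), z-scores use the population standard deviation, and the dictionary keys are hypothetical.

```python
from collections import defaultdict
from statistics import fmean, pstdev


def score_band(mean_rating, width=0.5):
    """Index of the score band a film's average falls into (width is an assumption)."""
    return int(mean_rating // width)


def edge_z_scores(films, width=0.5):
    """Standardise each film's 1-star and 10-star shares against its peers.

    films: dicts with hypothetical keys 'id', 'country', 'mean', 'one_share', 'ten_share'.
    Peers are films from the same country in the same score band.
    Returns {film_id: (z_one_star, z_ten_star)}.
    """
    groups = defaultdict(list)
    for f in films:
        groups[(f["country"], score_band(f["mean"], width))].append(f)

    result = {}
    for peers in groups.values():
        stats = {}
        for key in ("one_share", "ten_share"):
            values = [p[key] for p in peers]
            stats[key] = (fmean(values), pstdev(values))
        for f in peers:
            zs = []
            for key in ("one_share", "ten_share"):
                mu, sigma = stats[key]
                # A group with no spread has nothing unusual in it.
                zs.append((f[key] - mu) / sigma if sigma > 0 else 0.0)
            result[f["id"]] = tuple(zs)
    return result


if __name__ == "__main__":
    # Six placeholder films in one peer group; f6 has an outsized 10-star share.
    tens = [0.10, 0.11, 0.09, 0.10, 0.10, 0.40]
    films = [{"id": f"f{i}", "country": "Country X", "mean": 7.2,
              "one_share": 0.05, "ten_share": t} for i, t in enumerate(tens, start=1)]
    print(edge_z_scores(films)["f6"])
```

One design caveat: because each film is part of its own peer group, a group of n films can never produce a z-score above the square root of n - 1 with this definition, so groups of fewer than five films can never reach the z ≥ 2 threshold. A real analysis would want a minimum peer-group size or a leave-one-out baseline.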

Part of the reason I was able to whip up a long article at short notice is that I have been studying ratings, including those on IMDb, in the background for a long time. Ten years ago, I teamed up with a professor of astrostatistics to identify the creators and actors most associated with projects that received an unusually high number of push-up votes (as I did again above).

We created a list of 100 top candidates, and I emailed them all, offering them a chance to chat off the record. Perhaps to no one’s surprise, I got exactly zero replies.
