Do film critics and film audiences agree?

I’ve always wondered if film critics are biased towards certain types of movies, so I thought I would take a look at what the data can tell us. I looked at the 100 highest grossing films from each of the past 20 years, which gave me 2,000 films to study. I then cross-referenced data from IMDb’s user votes, Rotten Tomatoes’ audience percentage, Metacritic’s Metascore and Rotten Tomatoes’ Tomatometer. In summary…

Film critics and audiences do rate films differently

Film critics are tougher and give a broader range of scores

Film critics and audiences disagree most on horror, romantic comedies and thrillers.

Audiences and film critics gave the highest ratings to films that were distributed by Focus Features.

Film critics really do not like films distributed by Screen Gems

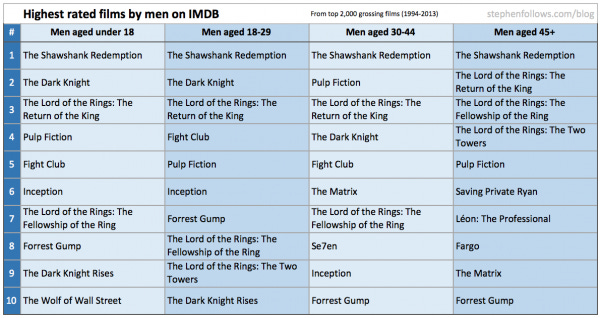

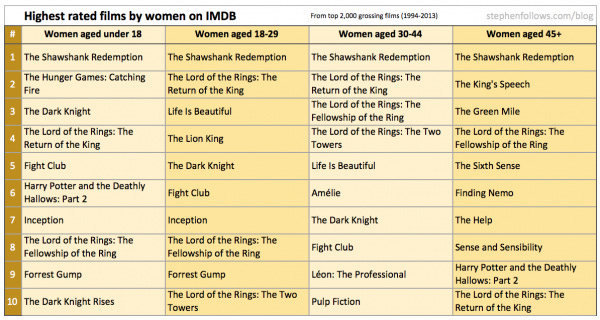

The Shawshank Redemption is the highest rated film in every audience demographic

Film critics rated 124 other films higher than The Shawshank Redemption

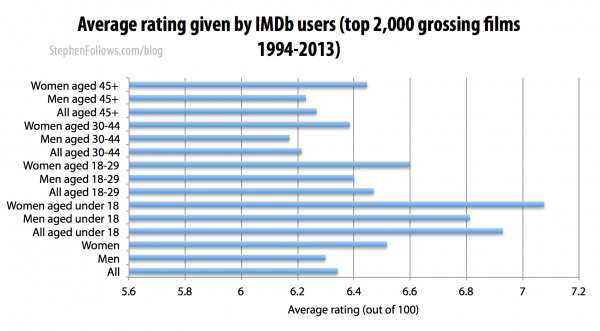

Female audience members tend to give films higher scores than male audiences

Older audience members give films much lower ratings than younger audiences

Rotten Tomatoes seems to have a higher proportion of women voting than IMDb, where only 1 in 5 votes comes from women.

IMDb seems to be staffed by men aged between 18 and 29 who dislike Black Comedies and love the 1995 classic ‘Showgirls’.

Do film critics and audiences rate in a similar way?

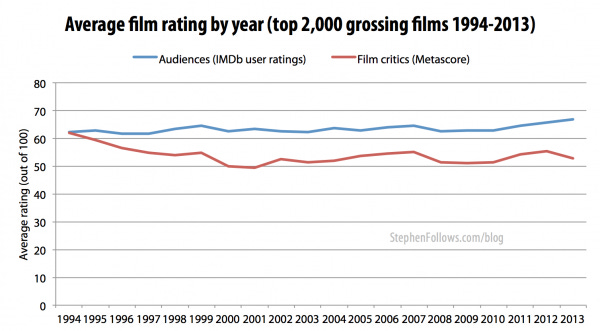

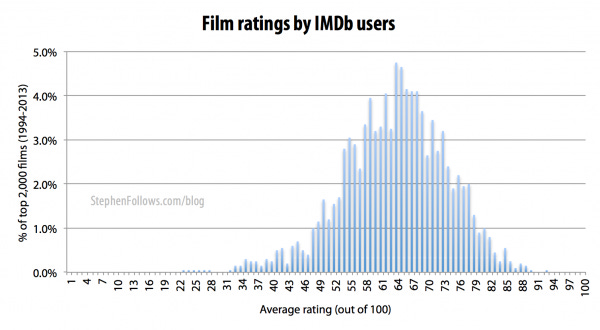

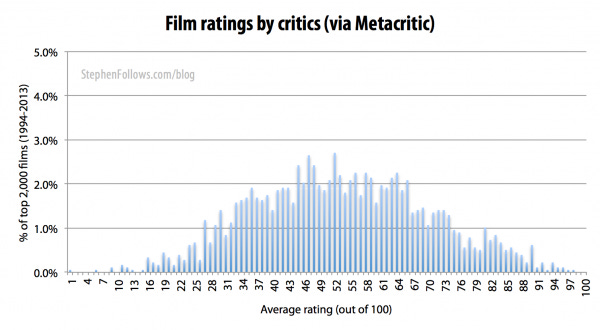

No. Film critics’ and audiences’ voting patterns differ in two significant ways. Firstly, critics give harsher judgements than audience members. Across all films in my sample, film critics rated films an average of 10 points lower than audiences.

Secondly, film critics also give a wider spread of ratings than audiences. On IMDb, half of all films were rated between 5.7 and 7.0 (out of 10) whereas half of all films on Metacritic were rated between 41 and 65 (out of 100).

This pattern was repeated on Rotten Tomatoes. Unlike IMDb and Metacritic, Rotten Tomatoes measures the percentage of people (or critics) who gave the film a positive review. Half of all films received an audience rating of between 47% and 76%, compared with between 28% and 73% from film critics.

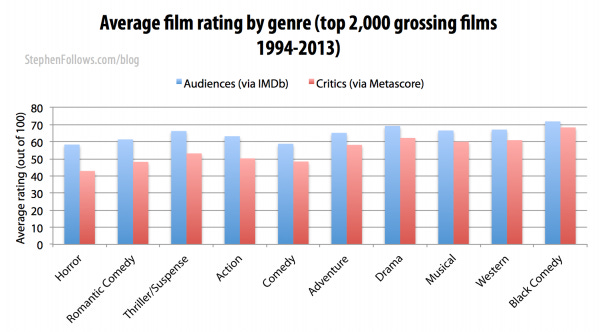
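For anyone wanting to reproduce this kind of comparison, here is a minimal sketch of the two measures discussed above: the average gap between critics and audiences, and the "half of all films" range (the lower and upper quartiles). The function names and the five scores are invented for illustration and are not the data behind this article. It also assumes that multiplying an IMDb rating by 10 is a fair way to put it on the Metascore's 0 to 100 scale.

```python
from statistics import mean, quantiles


def average_gap(audience_scores, critic_scores):
    """Mean of (critic score - audience score), both on a 0-100 scale."""
    return mean(c - a for a, c in zip(audience_scores, critic_scores))


def middle_half(scores):
    """Return the (lower quartile, upper quartile) of a list of scores."""
    q1, _median, q3 = quantiles(scores, n=4, method="inclusive")
    return q1, q3


# Made-up scores for five imaginary films (not data from this study).
imdb_out_of_10 = [5.5, 6.0, 6.5, 7.0, 7.5]
metascores = [35, 45, 55, 65, 75]

# Assumption: rescale IMDb to 0-100 so the two systems can be compared.
imdb_out_of_100 = [score * 10 for score in imdb_out_of_10]

print("Average gap (critics - audience):", average_gap(imdb_out_of_100, metascores))
print("Middle half, audience:", middle_half(imdb_out_of_100))
print("Middle half, critics:", middle_half(metascores))
```

A negative gap means critics scored the films lower on average, and a wider middle-half range means a broader spread of scores.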

Which genres divide film critics and audiences?

The difference between the ratings given by film critics and audiences is most pronounced within genres that are often seen as populist ‘popcorn’ fare, such as horror, romantic comedy and thrillers. Both film critics and audiences give the highest ratings to black comedies and dramas.

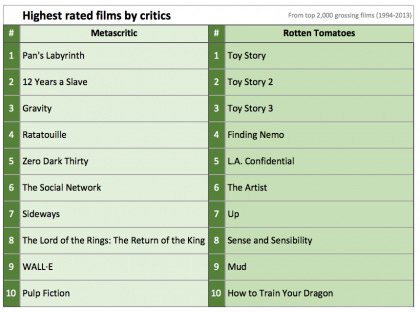

Do audiences and film critics share the same favourite films?

No. All segments of audiences put The Shawshank Redemption as their top film but it doesn’t even make it into the film critics’ top ten (it’s at #125 out of the 2,000 films I investigated).

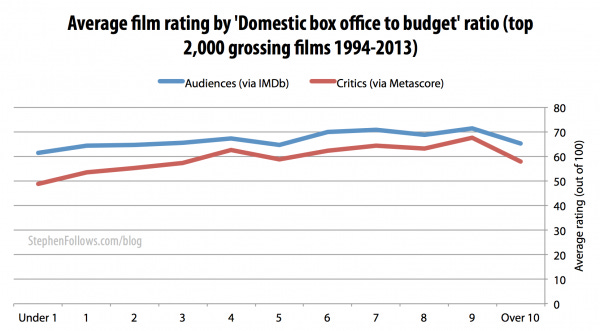

Do the most financially successful films get the highest ratings?

Yes. I charted film ratings against the ratio of North American box office gross to production budget (as I feel this gives a fairer representation of a film’s performance than just the raw amount of money it grossed).

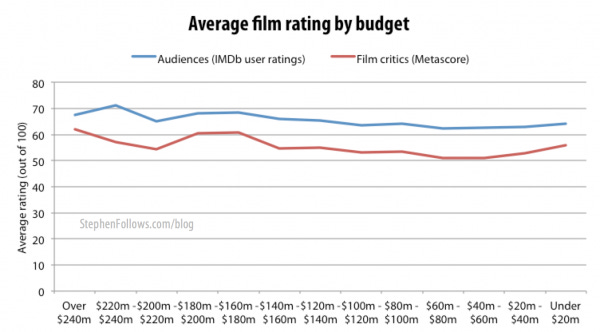
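To show why the ratio can tell a different story from the raw gross, here is a small sketch. The function name and both sets of figures are hypothetical; the only thing taken from the text above is the definition of the ratio itself.

```python
def gross_to_budget_ratio(domestic_gross, production_budget):
    """North American box office gross divided by production budget."""
    if production_budget <= 0:
        raise ValueError("production budget must be positive")
    return domestic_gross / production_budget


# Two imaginary films (figures invented for illustration, in US dollars).
small_film = gross_to_budget_ratio(30_000_000, 5_000_000)
big_film = gross_to_budget_ratio(200_000_000, 150_000_000)

# The big film grossed far more money, but the small film
# returned more per dollar spent on production.
print(f"Small film ratio: {small_film:.2f}")
print(f"Big film ratio: {big_film:.2f}")
```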

Do bigger budgets mean higher ratings?

Yes, both audiences and film critics prefer films with bigger budgets.

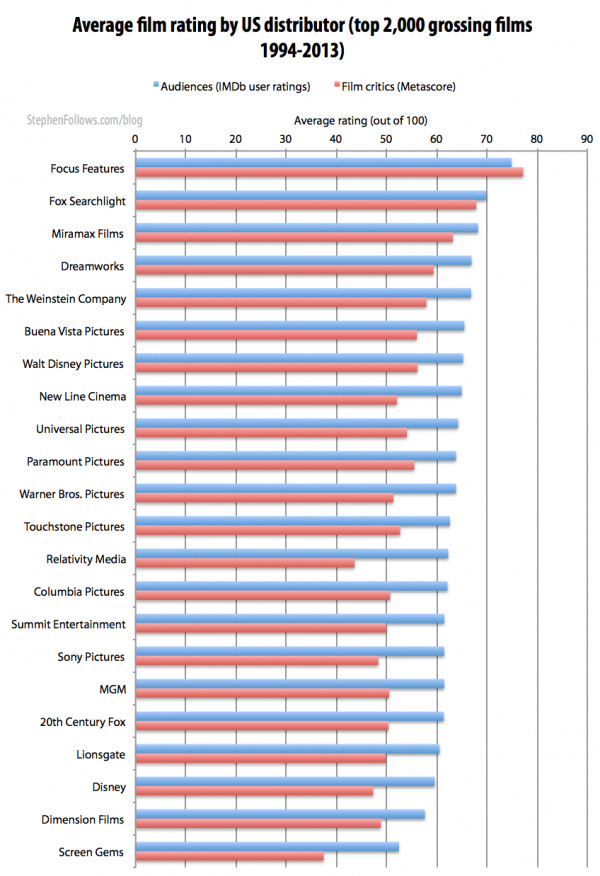

Do film critics and audiences agree which companies distribute the ‘best’ films?

Yes. Films distributed by certain companies consistently get the best reviews. This is not hugely surprising as we have already seen how film critics favour certain types of films and distribution companies tend to focus on certain types of films. Looking at company credits in all four scoring systems, Focus Features took the top spot, with Fox Searchlight second and Miramax coming in a close third.

Do critics and audiences agree which companies distribute the ‘worst’ films?

Yes, although there is less consensus than for the ‘best’ films. Films distributed by Screen Gems received the lowest scores on IMDb, Metacritic and the Tomatometer (they were second worst for audiences on Rotten Tomatoes).

Which distributors divide audiences and film critics?

Although the league tables of companies listed above are pretty similar, audiences and critics disagree about how good the ‘best’ are and how bad the ‘worst’ are. Film critics absolutely love films from Focus Features, to the extent that Focus is the only company whose films score higher with critics than with audiences.

Films distributed by Screen Gems, Relativity Media and New Line Cinema tend to get much poorer scores from critics than they do from audiences. Films by Relativity Media average 62/100 from audiences on IMDb whereas film critics only give them 44/100 on average.

Similarly, on Rotten Tomatoes, films by Screen Gems received positive reviews from 54% of audiences and only 28% of film critics.

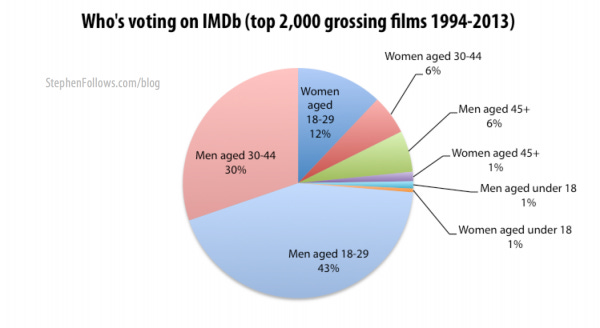
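The distributor comparison above boils down to grouping films by company, averaging each side's scores, and looking at the difference. The following is a rough sketch of that idea, assuming both scores are already on a 0 to 100 scale. The field names, the distributor names and the scores are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def gap_by_distributor(films):
    """Map each distributor to (audience mean, critic mean, critic - audience)."""
    grouped = defaultdict(list)
    for film in films:
        grouped[film["distributor"]].append(film)

    result = {}
    for distributor, group in grouped.items():
        audience = mean(f["audience"] for f in group)
        critics = mean(f["critics"] for f in group)
        result[distributor] = (audience, critics, critics - audience)
    return result


# Invented films and scores, purely for illustration.
films = [
    {"distributor": "Distributor A", "audience": 70, "critics": 80},
    {"distributor": "Distributor A", "audience": 60, "critics": 70},
    {"distributor": "Distributor B", "audience": 60, "critics": 40},
    {"distributor": "Distributor B", "audience": 50, "critics": 30},
]

gaps = gap_by_distributor(films)
for name, (audience, critics, gap) in gaps.items():
    print(f"{name}: audience {audience}, critics {critics}, gap {gap}")
```

A positive gap marks a company whose films critics rate higher than audiences do; a negative gap marks the opposite.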

Who is voting on IMDb?

Across my 2,000 films, I tracked over 174 million votes. Yup, that’s a lot! However, those votes are not evenly spread. For starters, 80% of votes were cast by men. Also, 54% of all votes came from people between the ages of 18 and 29.

Rotten Tomatoes appears to be better than IMDb at representing women

Rotten Tomatoes does not offer a split by gender. However, by comparing its results with the gender breakdown of IMDb votes, we can speculate that Rotten Tomatoes has a more equal gender split than IMDb, where 80% of votes come from men. My deduction is based mostly on there being many female-skewed films in the Rotten Tomatoes audience top 20, including Life Is Beautiful, The Pianist and Amelie (see earlier in this article for female Top 10 films).

Who works at IMDb?

In the breakdown of user votes on IMDb, they mention votes by IMDb staff. I wouldn’t worry about IMDb staff votes skewing the overall score as they accounted for only 0.01% of the total votes cast. But this let me have a quick bit of fun looking at what this information reveals about the kinds of people who work at IMDb.

The voting patterns suggest that IMDb staff…

Are mostly male and aged between 18 and 29

Enjoy horror films and dislike Black Comedies more than most IMDb users

Drink the Hollywood Kool-Aid, as shown by their disproportionate love of bigger budgets and movie spin-offs

Liked Showgirls a lot more than the average user

If anyone from IMDb is reading this, please can you do a quick straw poll in the office and let me know how far off I am? First one who does gets a limited edition Blu-Ray of Showgirls (as a back-up for the one you already own).

Methodology - The scoring systems I studied

I used four scoring systems to get a sense of how much “the public” and “the film critics” liked each film. In the blue corner, representing “The Public” we have…

IMDb user rating (out of 10) - IMDb users rate films out of 10 (the one exception being This Is Spinal Tap, where the rating is out of 11). IMDb displays the weighted average score at the top of each page, and provides a more detailed breakdown on the ‘User Ratings’ page. This outlines in detail how different groups rated that film, including by gender, age group and IMDb staff members.

Rotten Tomatoes audience score (a percentage) - The percentage of users who gave the film a positive score (at least 3.5 out of 5) on Rotten Tomatoes and Flixster websites.

And in the red corner, defending the honour of film critics everywhere, are…

Metacritic’s Metascore (out of 100) - Metacritic aggregates the scores given by a number of selected film critics and provides a weighted average. There is a breakdown of their methodology here.

Rotten Tomatoes’ Tomatometer (a percentage) - The percentage of selected film critics who gave the film a positive review.

Comparing the different scoring systems

Both of the Rotten Tomatoes scoring systems look at the percentage of people who gave a film a positive review, whereas IMDb and Metacritic are more interested in weighted averages of all the actual scores. This led me to wonder: are the two ‘public’ systems interchangeable, and are the two ‘critics’ systems? No. On the surface they seem similar but in the details they differ wildly.

Audience: IMDb versus Rotten Tomatoes - The average IMDb user score equates to 63/100 and the average Rotten Tomatoes score is 61/100. These are quite close, but in the details they are quite different. For example, horror films average 58/100 on IMDb but only 49/100 on Rotten Tomatoes.

Film Critics: Metacritic versus Tomatometer - The rival film critics’ scoring websites are in a similar situation, with close overall averages (53/100 on Metacritic and 51/100 on Tomatometer) but wildly different scores under the surface. (The average ‘Unrated’ film received 59/100 at Metacritic but just 35/100 on the Tomatometer).

Each system is valid but they should not be compared with one another.
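A toy example helps show why a "percentage positive" score and an average score are not interchangeable. The sketch below uses the 3.5 out of 5 audience cut-off mentioned earlier; everything else is an assumption. In particular, it uses a plain unweighted mean as a stand-in for the weighted averages that IMDb and Metacritic publish, and the ratings are invented.

```python
from statistics import mean

POSITIVE_THRESHOLD = 3.5  # out of 5, the audience cut-off quoted earlier


def percent_positive(ratings_out_of_5):
    """Share of ratings at or above the threshold, as a percentage."""
    positive = sum(1 for r in ratings_out_of_5 if r >= POSITIVE_THRESHOLD)
    return 100 * positive / len(ratings_out_of_5)


def average_out_of_100(ratings_out_of_5):
    """Plain (unweighted) mean, rescaled from 0-5 to 0-100."""
    return mean(ratings_out_of_5) * 20


# Invented ratings for two imaginary films with the same mean rating.
divisive_film = [5, 5, 1, 1]
lukewarm_film = [3, 3, 3, 3]

for name, ratings in [("Divisive", divisive_film), ("Lukewarm", lukewarm_film)]:
    print(name, average_out_of_100(ratings), percent_positive(ratings))
```

Both imaginary films have the same average, yet half of the first film's viewers rated it positively and none of the second film's did. That is the kind of divergence that can hide beneath similar-looking overall averages.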

Limitations

There are a number of limitations to this research, including…

Reliance on third parties - The data for today’s article came from a number of sources. I used IMDb, Rotten Tomatoes, Metacritic, Opus and The Numbers. This means that all of my data has been filtered to some degree by a third party, rather than being collected directly from source by me.

Films selected via Domestic BO - The 2,000 films I studied were the highest grossing at the domestic box office. This was because international box office figures become more unreliable as we look further back in time and at films lower down the box office chart.

Selection bias - This occurs at almost every level: only certain types of users vote online, only certain film critics are included in the aggregated film critics’ scores (some are also given added weighting by Rotten Tomatoes and Metacritic) and I also manually excluded films with an extremely small number of votes (under 100 for user votes and under 10 for critics).

Companies - The film industry involves a large number of specialised companies working together to bring films to audiences. There may be a few production companies, backed by a Hollywood studio who then pass the film on to a different distributor in each country and sometimes different distributors for different media (i.e. cinema, DVD, TV and VOD rights). In the ‘companies’ section of this research I chose to focus on US distributors who had distributed at least 10 films. Given unlimited resources, I would have preferred to study the originating production company as I feel they have a larger impact on the quality of the final film. Secondly, some films were released through joint arrangements between two distribution companies. I excluded these films from the company results as I don’t have the knowledge to be able to judge the principal company in each case. They accounted for a small number of films and so wouldn’t massively skew the results. Finally, some companies have been bought out by others and some have been closed down. I have used the names of the companies at the point the film was distributed, meaning that Miramax makes an appearance, as do both USA Films and Focus Features, despite the fact they are now the same company.
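The exclusion rules described in the limitations above can be written down as a simple filter. This is only a sketch: the thresholds come from the text, but the field names, the sample records and the order in which the rules are applied (votes first, then joint releases, then the 10-film minimum) are assumptions.

```python
from collections import Counter

MIN_USER_VOTES = 100             # films under this were excluded
MIN_CRITIC_REVIEWS = 10          # films under this were excluded
MIN_FILMS_PER_DISTRIBUTOR = 10   # minimum for the company tables


def has_enough_votes(film):
    """True if the film meets both vote thresholds."""
    return (film["user_votes"] >= MIN_USER_VOTES
            and film["critic_reviews"] >= MIN_CRITIC_REVIEWS)


def films_for_company_tables(films):
    """Keep well-voted, single-distributor films from distributors with 10+ films."""
    solo = [f for f in films
            if has_enough_votes(f) and len(f["distributors"]) == 1]
    counts = Counter(f["distributors"][0] for f in solo)
    return [f for f in solo
            if counts[f["distributors"][0]] >= MIN_FILMS_PER_DISTRIBUTOR]


# Invented records: 10 films from A, 3 from B, one joint release,
# and one film from A with too few user votes.
films = (
    [{"distributors": ["Distributor A"], "user_votes": 5000, "critic_reviews": 40}] * 10
    + [{"distributors": ["Distributor B"], "user_votes": 5000, "critic_reviews": 40}] * 3
    + [{"distributors": ["Distributor A", "Distributor B"], "user_votes": 5000, "critic_reviews": 40}]
    + [{"distributors": ["Distributor A"], "user_votes": 50, "critic_reviews": 40}]
)

kept = films_for_company_tables(films)
print(len(kept), "films kept for the company tables")
```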

Epilogue

This is yet another topic where I got a bit carried away. It was fun to get a window into the tastes of different groups and to spot patterns. I have a second part to this research, which I shall share in the coming weeks. If you have a question you want me to answer with film data then please contact me. And if you work for IMDb - I’m serious about the Showgirls Blu-ray.