Defining the average screenplay, via data on 12,000+ scripts

Last week, I published my analysis of 12,309 feature film screenplays and the scores they each received from professional script readers.

A byproduct of that research was a large number of data points on each of those screenplays, which allowed me to look at what the average screenplay contains.

Hopefully, this research will prove useful to writers, producers and directors looking to understand what a typical screenplay looks like, and will give them a benchmark against which they can assess their own work.

All of these scripts were reviewed by professional script readers, either as a part of a screenplay competition or to create a script report. The vast majority of these scripts will not have been produced into movies yet and a large number of the screenwriters will still be at entry level, rather than professional writers. That being said, within the dataset are scripts which have won awards, been optioned by established producers and been written by professionals and Hollywood stars.

In this article, I'll share what the typical feature film screenplay contains with regard to seven topics:

Number of pages

Level of swearing

Gender-skewed genres (and who writes female characters)

Number of speaking characters

Number of scenes

Location places and times of day

Age of primary characters

1. Number of pages

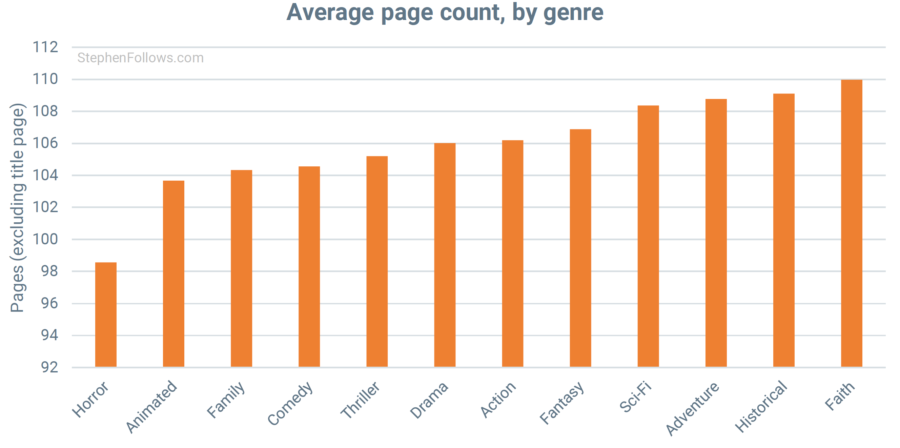

The median length across all of our scripts was 106 pages. However, there was a broad spectrum of lengths, with 68.5% of screenplays running between 90 and 120 pages long. As the chart below shows, there are spikes at round numbers, namely 90, 100, 110 and 120 pages.

Horror scripts are the shortest, with an average page count of 98.6, while Faith scripts are the longest at 110.0 pages.

2. Level of swearing

Warning: The charts contain uncensored uses of bad words. If this is not your thing, then skip to the next sub-section.

Almost four out of five scripts contained the word 's**t', with two-thirds featuring 'f**k' and just under one in ten using the word 'c**t'.

Although more scripts contain at least one ‘s**t’ than at least one ‘f**k’, when ‘f**k’ does appear it tends to be used more often than ‘s**t’. Across all our scripts, ‘s**t’ is used an average of 13.2 times, ‘f**k’ 23.9 times and ‘c**t’ 2.1 times.

Unsurprisingly, the swear words were not spread equally across all scripts. To compare scripts, I developed a swearing score based on the frequency of the three swear words I tracked, awarding 1 point for each use of ‘s**t’, 1.17 for ‘f**k’ and 8.51 for ‘c**t’.
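To make the scoring concrete, here is a minimal sketch of how such a weighted score could be calculated for a single script. The three weights are the ones quoted above. The function name, the dictionary layout and the example counts are hypothetical, chosen only for illustration, and this is not necessarily how the original analysis was implemented.

```python
# Weights per use of each word, as quoted in the article.
SWEAR_WEIGHTS = {"s**t": 1.0, "f**k": 1.17, "c**t": 8.51}


def swearing_score(counts):
    """Return the weighted sum of swear-word counts for one script.

    `counts` maps each tracked word to the number of times it appears.
    Words missing from `counts` are treated as zero uses.
    """
    return sum(weight * counts.get(word, 0) for word, weight in SWEAR_WEIGHTS.items())


# Illustrative counts only; these are not taken from the dataset.
example_counts = {"s**t": 10, "f**k": 20, "c**t": 1}
print(round(swearing_score(example_counts), 2))  # 10*1 + 20*1.17 + 1*8.51 = 41.91
```

A script with none of the three words scores zero, and a single ‘c**t’ counts for as much as eight or nine uses of ‘s**t’.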

Comedies are the sweariest, beating Action and Horror scripts by a tiny margin (Comedy scores 42.8, Action scores 42.5 and Horror scores 41.8). The genres featuring the lowest levels of swearing are Family (1.2), Animated (1.3) and Faith-based scripts (2.8).

Only sixteen scripts used ‘c**t’ without also using either ‘s**t’ or ‘f**k’ at least once.

3. Gender-skewed genres (and who writes female characters)

I have written at length in the past about gender inequality in the film industry, and so I won't discuss the topic in detail here. However, it is interesting to note how the gender split changes between different genres of scripts in the dataset.

The most male-dominated genres are Action (in which 8.4% of writers were women), Sci-Fi (14.1%) and Horror (14.5%). Women were best represented in Faith (47.2% female), Family (41.5%) and Animated (39.1%) scripts.

An interesting finding in last week's research was that when we look at the scores given by readers, there seems to be an advantage to writing in a genre dominated by another gender.

For example, Action is male-dominated but is also a genre in which female writers outperform their male counterparts by the second-largest margin. Likewise, Family films written by men received higher ratings than those by women.

My reading is that when it’s harder to write in a certain genre (either due to internal barriers like conventions or external barriers like prejudice), the writers who make it through are, almost by definition, the most tenacious and dedicated. This means that in a genre where there are few women (such as Action), the women who do write in it tend to be better than the average man in the same genre.

As well as tracking the gender of the writers, I also looked at the gender of the major characters of each script (where it was possible to do so).

In all but one genre, female screenwriters were more likely to create female leading characters. This was particularly pronounced in Historical films, where female characters in male-penned scripts account for only 39% of leading characters whereas the figure was 74% for scripts written by women.

This neatly illustrates one of the many reasons why gender inequality within the film industry can have negative outcomes. As well as basic fairness and equal opportunities, we also have to consider what characters we are seeing in movies. Culture can be defined as the stories we tell ourselves about ourselves, and so an overly-male writing community is likely to lead to a culture which overemphasises the plight of male characters, thereby undervaluing female characters, stories and perspectives.

4. Number of characters

The dataset allowed me to look at the number of unique characters who speak in each script, from our principal hero/heroine right through to background characters with single perfunctory lines.

Historical scripts have the greatest number of speaking characters (an average of 45.7) and Horror scripts have the fewest (25.8). Sadly, I was unable to track how many of those characters were still alive by the final page.

5. Number of scenes

The average script has 110 scenes – just over one scene per page. Action scripts have the greatest number of scenes (an average of 131.2 scenes) with Comedies having the fewest (just 98.5).

6. Location places and times of day

Each scene heading starts with an indication as to whether the scene takes place inside (“INT” for interior), outside (“EXT” for exterior) or a hybrid (“INT/EXT”).

Across all scripts, 60.2% of scenes are interiors, 38.9% are exteriors and 0.9% are hybrid locations.
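As a rough illustration of how scene headings can be sorted into these three groups, the sketch below classifies a heading by its opening prefix and then works out each group's share. The regular expression, the function names and the sample headings are all assumptions made for this example. Real scripts format their headings in many different ways, so the original analysis may well have used different rules.

```python
import re
from collections import Counter

# Hypothetical pattern: INT or EXT, optionally followed by a slash and the
# other prefix (e.g. "INT/EXT", "INT./EXT."), then whitespace or end of line.
HEADING_RE = re.compile(
    r"^\s*(INT|EXT)\.?(?:\s*/\s*(INT|EXT)\.?)?(?=\s|$)", re.IGNORECASE
)


def classify_heading(heading):
    """Return 'interior', 'exterior', 'hybrid', or None if not a scene heading."""
    match = HEADING_RE.match(heading)
    if match is None:
        return None
    first, second = match.group(1).upper(), match.group(2)
    if second is not None and second.upper() != first:
        return "hybrid"
    return "interior" if first == "INT" else "exterior"


def location_type_shares(headings):
    """Return the share of recognised headings that fall into each group."""
    kinds = [kind for kind in map(classify_heading, headings) if kind is not None]
    counts = Counter(kinds)
    return {kind: counts[kind] / len(kinds) for kind in counts}


# Made-up headings for illustration.
sample = [
    "INT. KITCHEN - NIGHT",
    "EXT. DESERT - DAY",
    "INT/EXT. CAR - DAY",
    "INT. OFFICE - DAY",
    "FADE IN:",  # not a scene heading, so it is ignored
]
print(location_type_shares(sample))
```

With the sample above, two of the four recognised headings are interiors, one is an exterior and one is a hybrid.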

Westerns are mostly set outside, with 64.4% of their scenes taking place in exterior locations. At the opposite end of the scale, we see 65.2% of Comedy scenes taking place indoors.

Something that will make producers wince is that the average location only appears in 1.5 scenes.
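One plausible way to arrive at a figure like this is to divide the number of scenes by the number of distinct locations. The sketch below does that for a single script. The function name and the normalisation (trimming whitespace and ignoring case) are assumptions for illustration. The article does not say whether the 1.5 figure was calculated per script or across the pooled dataset.

```python
def scenes_per_location(scene_locations):
    """Return the average number of scenes set in each distinct location.

    `scene_locations` holds one location name per scene, in script order.
    Names are compared after trimming whitespace and ignoring case.
    """
    distinct = {name.strip().upper() for name in scene_locations}
    if not distinct:
        return 0.0
    return len(scene_locations) / len(distinct)


# Made-up example: three scenes spread across two locations.
print(scenes_per_location(["Kitchen", "KITCHEN", "Street"]))  # 3 / 2 = 1.5
```

The closer this number is to 1, the more often the production would have to move to a location it uses only once.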

58.3% of scenes take place during the day and 41.7% take place at night. Perhaps unsurprisingly, Horror scripts are much more likely to be set at night (56.5% of scenes), whereas Historical scripts are the most nyctophobic, with only 28.9% of scenes taking place at night.
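Scene headings conventionally end with the time of day (for example, "INT. KITCHEN - NIGHT"), so a day/night split can be sketched by reading the last hyphen-separated segment. The function names and sample headings below are hypothetical. This sketch only recognises the literal labels DAY and NIGHT and skips everything else, whereas the original analysis may have handled labels such as DAWN or CONTINUOUS differently.

```python
def time_of_day(heading):
    """Return 'day', 'night', or None based on the heading's final segment."""
    last_segment = heading.rsplit("-", 1)[-1].strip().upper()
    if last_segment == "DAY":
        return "day"
    if last_segment == "NIGHT":
        return "night"
    return None  # e.g. CONTINUOUS, LATER, or no time of day given


def night_share(headings):
    """Return the share of day/night-labelled headings that are set at night."""
    labels = [label for label in map(time_of_day, headings) if label is not None]
    if not labels:
        return 0.0
    return labels.count("night") / len(labels)


# Made-up headings for illustration.
sample_headings = [
    "INT. KITCHEN - NIGHT",
    "EXT. STREET - DAY",
    "INT. CAR - CONTINUOUS",  # skipped: neither DAY nor NIGHT
    "EXT. WOODS - NIGHT",
]
print(round(night_share(sample_headings), 3))  # 2 of 3 labelled scenes: 0.667
```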

7. Age of primary characters

Among characters who are given a specific age, the average age of the top five characters across all the scripts is 31.8.

The character who speaks most often is typically a little younger (average age: 28.3) and, as we move down to characters who speak less frequently, the age increases slightly. The average age of the fifth most frequent speaker is 35.4.

The median age is 30 years old, with 15.4% of all characters being listed as exactly 30.

Notes

Today's research is riding on the coat-tails of my 'Judging Screenplays By Their Coverage' report and so comes with the same notes, definitions and caveats.

I would suggest reading either last week's article or the full 67-page report for details. This is particularly important for understanding our methodology on complicated topics such as gender.